-

Products -

Website & Course Building Build your website your way

-

Lead Generation Turn more visitors into leads

-

Conversion Rate Optimization Perfect every detail to maximize revenue

-

Get the Complete Suite Get all 9 plugins at a fraction of the price

-

-

Features -

Your desired outcome -

Features for success -

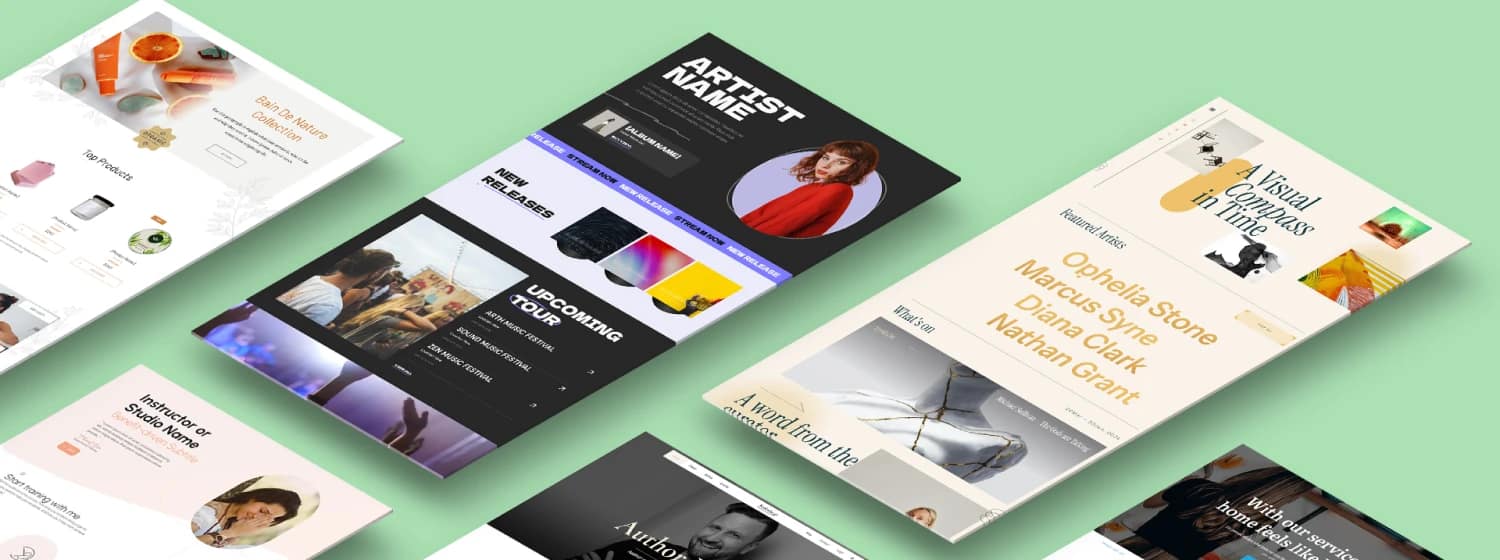

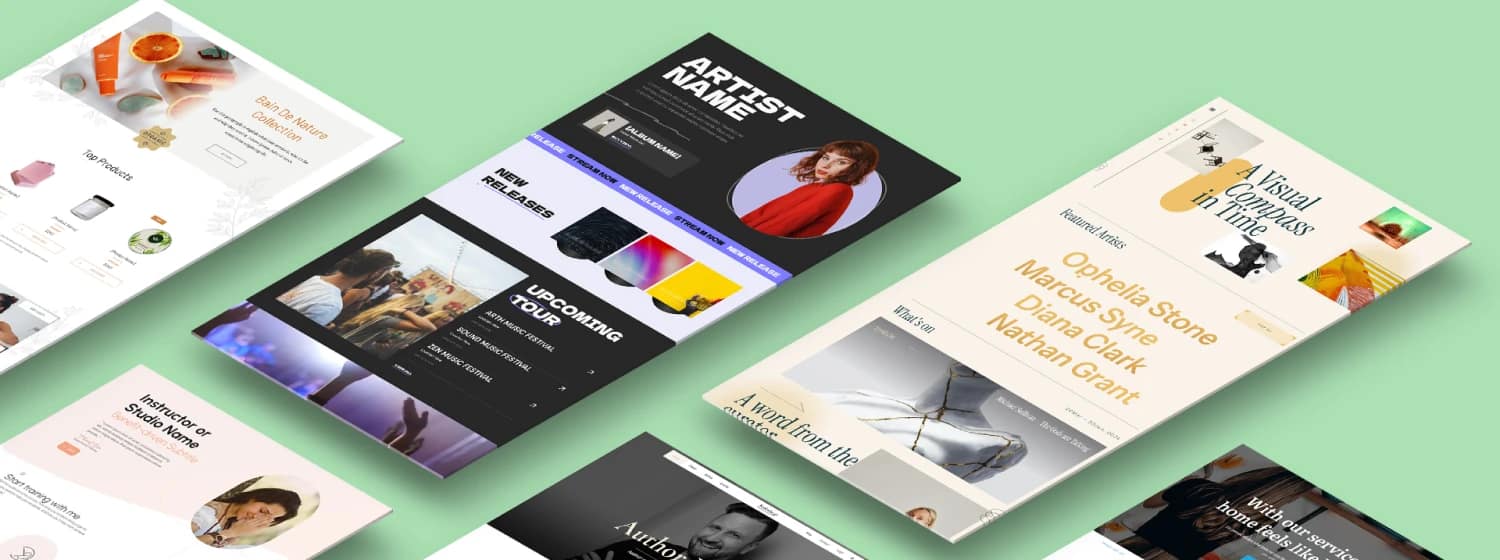

Templates Design quicker with conversion focused templates for pages, sections, forms, and more....

-

-

Resources -

Learn -

Help & Support -

Latest Release Discover the newest product and feature updates

-

-

Pro Services -

Done for You Website Building Get a perfectly optimized website built by experts just for you!

-

Site Speed Optimization Boost your user experience, SEO, and conversions by ensuring top website performance.

-

WordPress Maintenance Let us take care of important maintenance tasks like security, backups, and updates.

-

On-Demand WordPress Developers Enlist our designers and developers to help you with a project.

-

-

Pricing

