TL;DR: Unlock Your WordPress Site's Hidden Potential with A/B Testing

This article is your straightforward guide to A/B testing on WordPress. I'll show you how to stop guessing and start making data-driven decisions to boost your website's performance.

Here are the three big takeaways:

- A/B testing isn't just for big tech. It's a practical way for any WordPress site owner to understand what truly resonates with visitors and drives conversions.

- Planning beats guessing. A clear hypothesis, a single element to test, and enough time to gather data are your best friends for reliable results.

- The right tools make all the difference. I'll walk you through options, including our own Thrive Optimize, to help you pick a solution that fits your comfort level and goals.

Ready to turn your WordPress site into a conversion machine? Keep reading for the full breakdown.

I see a lot of smart business owners shy away from something genuinely powerful: A/B testing on WordPress. I get it. The idea of "split testing" or "conversion rate optimization" can sound like a dark art, full of complex statistics and coding wizardry. But the truth is, it doesn't have to be.

I'm here to cut through the noise and show you how to approach A/B testing in a way that feels practical, not intimidating. My goal is to help you understand what it is, why it matters for your WordPress site, and how you can start running your own tests to make smarter decisions about your website. We’ll cover everything from planning your first experiment to picking the right tools, and even how to make sense of the results. Think of it as having a quiet conversation over coffee about how to make your website work harder for you. No jargon, just clear steps and actionable advice for better WordPress conversion optimization.

If you're interested in the bigger picture of how all these pieces fit together, you should check out our guide on How to Create a Clean, Conversion-Focused WordPress Website.

Why Bother with A/B Testing Your WordPress Site?

I often hear people ask, "Do I really need to A/B test?" My answer is always a resounding yes.

It's not about making random changes; it's about making informed ones. If you're serious about turning more visitors into subscribers, customers, or clients, then you need to know what actually moves the needle. A/B testing is a core component of any effective strategy to improve WordPress conversions.

Imagine you have a landing page. You've put a lot of effort into it, but it's not quite performing the way you hoped. What do you change? The headline? The button color? The image? Without A/B testing, you're just guessing. You might make a change that actually hurts your conversions, and you'd never know.

A/B testing lets you compare two (or more) versions of an element – maybe a page, a form, or even just a headline – to see which one performs better with your actual visitors. It's like asking your audience directly, "Which one do you prefer?" but with data, not opinions.

How A/B Testing Helps Your Site

This helps you:

- Boost conversion rates: This is the big one. More sign-ups, more sales, more leads.

- Understand your audience: You learn what resonates with them, what catches their eye, and what makes them take action.

- Reduce guesswork: Stop making changes based on a hunch. Start making them based on evidence.

- Enhance user experience: Often, the version that converts better also provides a clearer, more enjoyable experience for your visitors.

Ultimately, A/B testing is how you build a website that isn't just pretty, but genuinely effective. It's one of the most powerful website optimization tools at your disposal.

Before You Start: Planning Your A/B Test

Jumping straight into testing without a plan is a bit like throwing spaghetti at the wall to see what sticks. It might work, but it's messy and inefficient. Before you touch any tools, I always suggest taking a moment to plan your WordPress A/B testing strategy.

1. Define Your Goal and Hypothesis

Start with one clear question: what are you actually trying to improve?

I don't mean something vague like "better performance." I mean the specific action you want more people to take. More email sign-ups from your homepage? More purchases of a particular product? More clicks on your pricing page button? Write it down in one sentence.

Once you've nailed that, you need a hypothesis. This is your educated guess about what's getting in the way and how to fix it. Think of it as your "I think X is happening because Y, so if I change Z, I'll get better results" statement.

Here's what that looks like in practice:

Let's say your product page gets traffic but sales are flat. You suspect the headline is too vague—visitors land, glance around, and leave without understanding what you're selling. Your hypothesis might be: "Changing my headline from 'Premium WordPress Solutions' to 'WordPress sites that convert visitors into customers' will increase sales because people will immediately understand the benefit."

Or maybe you've noticed your call-to-action button gets ignored. You think it's blending into the background. Your hypothesis: "Switching my CTA button from gray to bright orange will get more clicks because it'll actually stand out against my blue page design."

The specificity matters. A weak hypothesis is "Red buttons perform better." A strong one explains why you think red will work for your particular page. That clarity keeps you from testing random changes and hoping something works.

2. Identify Your Key Metrics

How will you measure success? This usually ties directly to your goal. If your goal is more sign-ups, your key metric is the conversion rate of your sign-up form. If it's more sales, it's the conversion rate of your checkout page.

Don't get bogged down in too many metrics. Pick one primary metric that directly reflects your goal.

3. Choose What to Test (One Element at a Time)

This is important: test one significant change at a time. If you change the headline, the image, and the button color all at once, and your conversion rate goes up, you won't know which change caused the improvement.

Think about elements that have a direct impact on your goal. Here are some common ideas for WordPress conversion optimization:

- Headlines: The first thing people read.

- Call-to-Action (CTA) buttons: Text, color, size, placement.

- Images/Videos: Hero images, product shots, explainer videos.

- Page Layout: The order of sections, amount of text.

- Forms: Number of fields, field labels, placement.

- Pricing: How you present your pricing tiers.

- Social Proof: Testimonials, review counts, trust badges.

4. How Long Should Your A/B Test Run?

This isn't a "set it and forget it for a day" kind of thing. You need enough traffic to reach what we call "statistical significance": basically, enough data to be confident that your results aren't just random chance.

I generally recommend running tests for at least one to two full weeks. This helps account for day-of-the-week variations in traffic and behavior. If your site has lower traffic, you might need to run it for a month or even longer to gather enough data. Don't stop a test early just because one variation seems to be winning after a few days; that's a common mistake. Patience is a virtue in website optimization.
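To see why lower-traffic sites need longer tests, here's a rough sample-size sketch using the standard normal-approximation formula for comparing two conversion rates. The 3% baseline rate, the 20% relative lift, the daily traffic figures, and the conventional defaults (a 0.05 significance level and 80% power) are all example assumptions, not numbers from any particular tool. Rounding up to whole weeks mirrors the day-of-the-week advice above.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH version to detect a relative lift.

    Normal-approximation formula for comparing two proportions with a
    two-sided test at significance level `alpha` and the given `power`.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_needed(baseline_rate: float, relative_lift: float,
                daily_visitors: int, versions: int = 2) -> int:
    """Days needed to reach that sample, rounded up to whole weeks
    (minimum one week) so every day of the week is represented."""
    total = visitors_per_variant(baseline_rate, relative_lift) * versions
    days = math.ceil(total / daily_visitors)
    return max(7, math.ceil(days / 7) * 7)


# Example assumptions: 3% baseline conversion rate, looking for a 20% relative lift.
print("Visitors per version:", visitors_per_variant(0.03, 0.20))
for traffic in (2000, 500, 100):
    print(f"{traffic} visitors/day -> about {days_needed(0.03, 0.20, traffic)} days")
```

Under these example assumptions, a page with 2,000 visitors a day needs about two weeks, while a page with 100 visitors a day needs many months. Smaller expected lifts push those numbers up quickly.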

How Does A/B Testing Actually Work? (The Technical Side)

Say you're testing your landing page. Half your visitors see a green "Buy Now" button (Version A). The other half see an orange one (Version B). At the end of the week, you check which version got more clicks.

That's the human-readable version. Here's what actually happens under the hood:

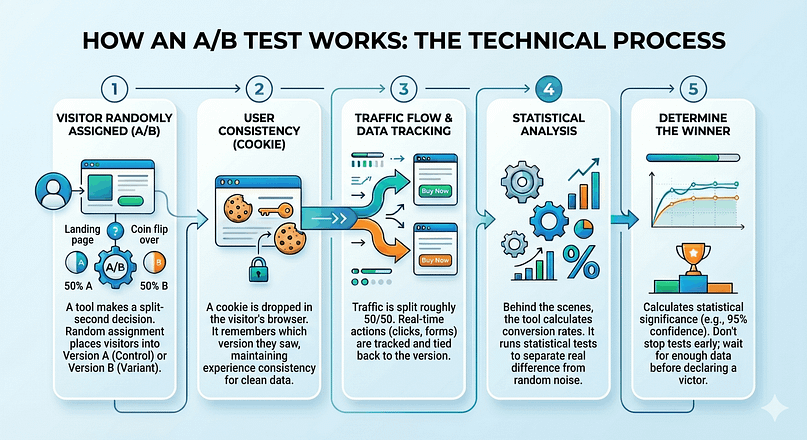

When someone lands on your page, your A/B testing tool makes a split-second decision: which version should this person see? Most tools use random assignment, essentially a coin flip. The visitor gets bucketed into either the control group (Version A) or the variant group (Version B).

Once that decision is made, the tool drops a cookie in their browser. This cookie remembers which version they saw, so if they come back tomorrow or click around your site, they keep seeing the same version. Consistency matters. If someone sees the green button on Monday and the orange button on Wednesday, your data turns into mush.
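Here's a minimal Python sketch of that assign-then-remember logic. A plain dictionary stands in for the visitor's cookies, and the cookie name is hypothetical; a real WordPress plugin would do this in PHP or JavaScript with its own naming.

```python
import random
from typing import Optional

COOKIE_NAME = "ab_landing_test"  # hypothetical cookie name, for illustration only
VARIANTS = ("A", "B")


def assign_variant(cookies: dict, rng: Optional[random.Random] = None) -> str:
    """Return this visitor's version, assigning one at random on the first visit.

    `cookies` stands in for the browser's cookie jar. Once a version is stored
    there, later visits reuse it, so the visitor always sees the same version.
    """
    stored = cookies.get(COOKIE_NAME)
    if stored in VARIANTS:
        return stored                 # returning visitor: keep their version
    if rng is None:
        rng = random.Random()
    variant = rng.choice(VARIANTS)    # the "coin flip"
    cookies[COOKIE_NAME] = variant    # remember the assignment
    return variant


visitor_cookies: dict = {}
first_visit = assign_variant(visitor_cookies)
later_visits = [assign_variant(visitor_cookies) for _ in range(5)]
print(f"First visit: Version {first_visit}; later visits: {later_visits}")
```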

Now, traffic starts flowing. Version A gets shown to roughly 50% of visitors. Version B gets the other 50%. I say "roughly" because true randomization isn't perfectly even at small sample sizes. If you've only had 20 visitors, you might see a 12-8 split. That evens out as more people arrive.
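A quick simulation shows how the split evens out as traffic grows. It uses Python's seeded random generator purely to illustrate fair coin-flip assignment; the exact counts depend on the seed.

```python
import random


def simulate_split(n_visitors: int, seed: int = 42) -> tuple:
    """Assign visitors to A or B with a fair coin flip; return (count_a, count_b)."""
    rng = random.Random(seed)
    count_a = sum(1 for _ in range(n_visitors) if rng.random() < 0.5)
    return count_a, n_visitors - count_a


for n in (20, 200, 2_000, 20_000):
    a, b = simulate_split(n)
    print(f"{n:>6} visitors -> A: {a:>6}  B: {b:>6}  (A gets {a / n:.1%})")
```

Small runs can look lopsided, while the share for each version drifts toward 50% as the visitor count grows.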

While this is happening, the tool tracks actions. Did the visitor click the button? Fill out the form? Add something to their cart? Every meaningful action gets logged and tied back to the version they saw. This is where conversion tracking comes in — you need to tell your tool what counts as a "win."

Behind the scenes, the plugin is doing math. It's calculating conversion rates for each version (number of conversions divided by number of visitors). But it's also running statistical tests to figure out if the difference between A and B is real or just random noise. This is called statistical significance, and it's the reason you can't call a winner after 10 visitors.
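Here's a small sketch of that bookkeeping: each tracked visit is tied to the version the visitor saw, and the conversion rate per version is conversions divided by visitors. The event log and its field names are hypothetical example data.

```python
from collections import defaultdict

# Hypothetical tracking log: one record per visitor, tied to the version they saw.
events = [
    {"visitor": "v1", "variant": "A", "converted": False},
    {"visitor": "v2", "variant": "B", "converted": True},
    {"visitor": "v3", "variant": "A", "converted": True},
    {"visitor": "v4", "variant": "B", "converted": False},
    {"visitor": "v5", "variant": "B", "converted": True},
    {"visitor": "v6", "variant": "A", "converted": False},
]


def conversion_rates(log):
    """Conversion rate per version = conversions / visitors."""
    visitors = defaultdict(int)
    conversions = defaultdict(int)
    for event in log:
        visitors[event["variant"]] += 1
        conversions[event["variant"]] += int(event["converted"])
    return {v: conversions[v] / visitors[v] for v in sorted(visitors)}


for variant, rate in conversion_rates(events).items():
    print(f"Version {variant}: {rate:.1%}")
```

With only six visitors, B's apparent lead means nothing, which is exactly the significance problem described above.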

Many tools use something like a chi-square test or a t-test. You don't need to understand the formulas. What matters is this: the test tells you how confident you can be that Version B actually performs better than Version A. If the test says "95% confidence," that means that if the two versions really performed the same, you'd see a gap this large less than 5% of the time.
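To make that less abstract, here's a sketch of a Pearson chi-square test on a 2x2 table (converted vs. not converted, per version) using only Python's standard library. The visitor and conversion counts are made-up example numbers, and your tool may use a different test or correction.

```python
import math


def chi_square_2x2(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Pearson chi-square test on a 2x2 table (converted / not converted per
    version), 1 degree of freedom, no continuity correction.

    Returns (chi-square statistic, two-sided p-value).
    """
    observed = [[conv_a, n_a - conv_a], [conv_b, n_b - conv_b]]
    row_totals = [n_a, n_b]
    col_totals = [conv_a + conv_b, (n_a - conv_a) + (n_b - conv_b)]
    total = n_a + n_b
    if 0 in col_totals:
        return 0.0, 1.0  # nobody (or everybody) converted: no evidence either way
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / total
            chi2 += (observed[i][j] - expected) ** 2 / expected
    # With 1 degree of freedom, P(chi-square > x) = erfc(sqrt(x / 2)).
    return chi2, math.erfc(math.sqrt(chi2 / 2))


# Illustrative counts: 5,000 visitors per version.
chi2, p = chi_square_2x2(conv_a=150, n_a=5000, conv_b=190, n_b=5000)
print(f"chi-square = {chi2:.2f}, p-value = {p:.3f}")
print("Significant at the 95% level" if p < 0.05 else "Not significant yet")
```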

Here's where people trip up: they see Version B ahead by 2% after a day and declare victory. But statistical significance takes time. You need enough visitors and enough conversions before the numbers stabilize. A good A/B testing tool will show you a confidence meter or a "significance reached" indicator. Don't stop the test early, even if one version looks like it's winning. Let it run until you hit that threshold.
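You can see the cost of stopping early with an A/A simulation: both "versions" are identical, so any declared winner is a false alarm. This sketch uses a pooled two-proportion z-test, a common way to compare conversion rates. The traffic (200 visitors per version per day), the 5% conversion rate, and the 14-day length are arbitrary assumptions.

```python
import math
import random


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def aa_false_winner_rates(sims=200, days=14, daily_per_version=200,
                          true_rate=0.05, seed=1):
    """Simulate A/A tests and compare two habits: checking every day
    (stopping at the first 'significant' result) vs. checking once at the end."""
    rng = random.Random(seed)
    peek_false = end_false = 0
    for _sim in range(sims):
        conv_a = conv_b = n_a = n_b = 0
        ever_significant = False
        for _day in range(days):
            for _visitor in range(daily_per_version):
                n_a += 1
                conv_a += int(rng.random() < true_rate)
                n_b += 1
                conv_b += int(rng.random() < true_rate)
            if p_value(conv_a, n_a, conv_b, n_b) < 0.05:
                ever_significant = True  # a daily "peeker" would have stopped here
        peek_false += int(ever_significant)
        end_false += int(p_value(conv_a, n_a, conv_b, n_b) < 0.05)
    return peek_false / sims, end_false / sims


peeking, fixed = aa_false_winner_rates()
print(f"False winners, checking daily:       {peeking:.0%}")
print(f"False winners, checking once at end: {fixed:.0%}")
```

Every daily check is another chance for random noise to cross the 95% line, so the "check daily" rate typically comes out several times higher than the roughly 5% you get from a single check at the end.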

On WordPress specifically, how you run an A/B test depends on your setup. Some tools work at the page level — they create duplicate pages and split traffic between URLs. Others work at the element level — they load the same page but swap out specific elements (buttons, headlines, images) using JavaScript. Both approaches work. The page-level method is cleaner for SEO. The element-level method is faster to set up.

Either way, the mechanics are the same: random assignment, consistent experience per user, conversion tracking, statistical analysis. That's the engine. What you test and how you interpret the results? That's the art.

Which A/B Testing Tools Work Best for WordPress?

You've got three main routes for A/B testing on WordPress: plugins that live inside your page builder, standalone WordPress plugins, or external platforms that hook into your site. Each has its place depending on how you like to work and what you're already using.

I'm going to walk through the tools I've actually tested (or watched clients wrestle with). Some make split testing feel natural. Others... well, they technically work, but you'll spend more time fighting the interface than analyzing results.

The right tool depends on whether you want something visual and integrated, or if you prefer a separate analytics layer that sits on top of your existing setup. Neither approach is wrong — it's about what clicks with how you already build pages and think about data.

1. Thrive Optimize (with Thrive Architect)

Best For: Small businesses, solopreneurs, and marketers who want a fully integrated, visual A/B testing solution within WordPress.

I'm a big fan of Thrive Optimize because it's built directly into Thrive Architect, our visual page builder. This means you can create your page variations and set up your tests all from one interface, without jumping between different tools. It's designed to be straightforward, especially if you're already building your pages with Thrive Architect. This makes it a fantastic tool for WordPress conversion optimization.

Price: Included with Thrive Architect ($199/year for the standalone product, or part of Thrive Suite).

2. Nelio A/B Testing

Best For: WordPress users looking for a dedicated, feature-rich A/B testing plugin that handles more than just pages.

Nelio A/B Testing is a solid option if you want a standalone plugin that offers a lot of flexibility. It lets you test pages, posts, custom post types, themes, widgets, and even CSS. It's a comprehensive tool for those who want to dig deeper into various elements of their WordPress site. It's definitely one of the more versatile website optimization tools available for WordPress.

Price: Free version available; premium plans start around $30/month.

3. VWO (Visual Website Optimizer)

Best For: Larger organizations or businesses with significant traffic and a need for advanced testing capabilities beyond just A/B.

VWO is a powerful, enterprise-level optimization platform. While it's not WordPress-specific, it integrates with WordPress sites and offers a vast array of testing types (A/B, multivariate, split URL) along with heatmaps, session recordings, and personalization features. It's a serious tool for serious optimizers, but it might be overkill for smaller sites looking for simple WordPress conversion optimization.

Price: Starts around $300/year, but varies based on traffic and features.

4. OptinMonster

Best For: Testing popups, lead generation forms, and other conversion elements specifically.

OptinMonster is primarily known for its lead generation capabilities, but it also includes strong A/B testing features for its campaigns. If your main focus is improving your popups, slide-ins, and other opt-in forms, this is a great choice. It's a specialized split testing plugin for WordPress if your goal is lead capture.

Price: Starts around $10/month (billed annually).

5. Google Optimize (No Longer Available)

You might have heard of Google Optimize in the past. It was a popular free tool for A/B testing, but Google unfortunately retired it on September 30, 2023. This is why it's so important to have reliable alternatives. If you were using it, you'll need to move to one of the other tools I've mentioned.

Free vs. Premium A/B Testing Tools: Which Do You Need?

I get asked this all the time: "Should I pay for an A/B testing tool, or can I get by with a free one?"

Fair question. The answer depends on what you're actually testing and how much traffic you're working with.

Free tools work fine when you're just getting started. You can run basic tests on headlines, button colors, or CTA placement without spending a cent. The catch? You'll hit limits fast — usually around the number of active tests, traffic caps, or access to features like multivariate testing.

Premium tools give you room to grow. You can run multiple tests at once, target specific user segments, and get deeper analytics that tell you why something worked, not just that it worked. If you're running a business where conversion rates directly impact revenue, the upgrade usually pays for itself.

Here's how the two stack up:

Free vs Premium A/B Testing Tools

| Feature | Free Tools | Premium Tools |
|---|---|---|
| Number of active tests | 1-2 at a time | Unlimited (or very high cap) |
| Traffic limits | Often capped at 1,000-5,000 visitors/month | No limits or much higher thresholds |
| Test types | Basic A/B splits | A/B, multivariate, split URL, personalization |
| Targeting options | Limited (device, location) | Advanced (behavior, referral source, custom segments) |
| Analytics depth | Basic win/loss reporting | Heatmaps, session recordings, statistical confidence |
| Support | Community forums, documentation | Priority support, onboarding, strategy calls |
| Integration options | WordPress + 1-2 email tools | Full ecosystem (CRM, analytics, email, e-commerce) |

If you're running a blog or a small site with modest traffic, start free. A tool like the free version of Nelio A/B Testing gives you enough to learn the basics and see if testing actually moves the needle for you.

But if you're running an online store, a membership site, or any business where a 1-2% lift in conversions means real money, go premium. You'll want the ability to test more aggressively, segment your audience, and dig into the data without hitting artificial caps.

One more thing: don't confuse "free" with "no learning curve." Even the simplest tools require some setup and a basic understanding of statistical significance. A premium tool won't magically make you smarter about testing — it just gives you more firepower once you know what you're doing.

Start where you are. Upgrade when the free version starts holding you back.

How to Run an A/B Test on Your WordPress Website (Step-By-Step with Thrive Optimize)

For this walkthrough, I'm going to show you how I typically set up an A/B test using Thrive Optimize. I find it's one of the most straightforward ways to get started with page-level testing directly within WordPress, making it simple to improve WordPress conversions.

1. Get Thrive Architect + Thrive Optimize

First things first, you'll need Thrive Architect installed and activated on your WordPress site. Thrive Optimize comes bundled with it, so you get both when you purchase Thrive Architect. If you're not already building your pages with Thrive Architect, this is a good opportunity to consider it, especially if you're looking for a visual page builder that's deeply integrated with conversion optimization tools.

You can grab Thrive Architect (and Thrive Optimize) as a standalone product or as part of our Thrive Suite bundle.

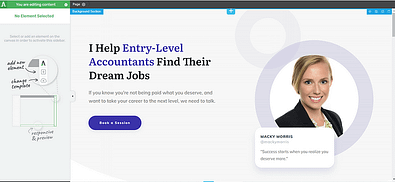

2. Launch a Page in Thrive Architect

You'll need a page to test. This can be an existing page you want to improve, or a brand new one.

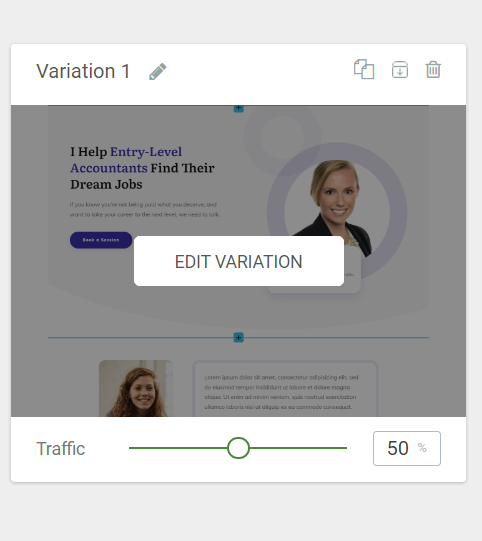

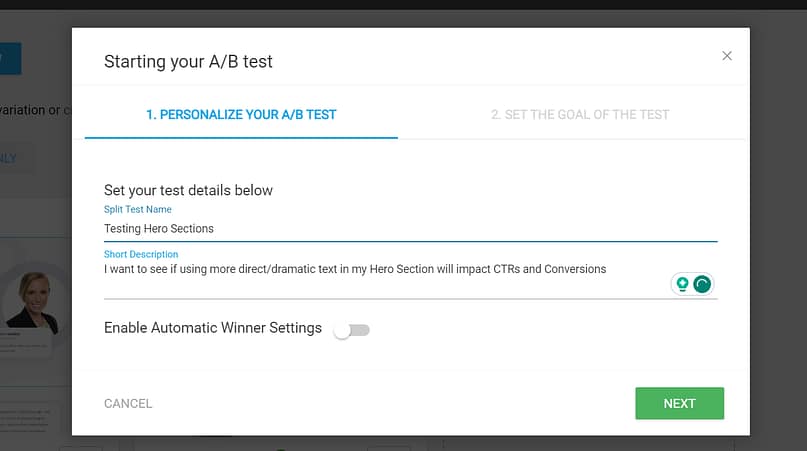

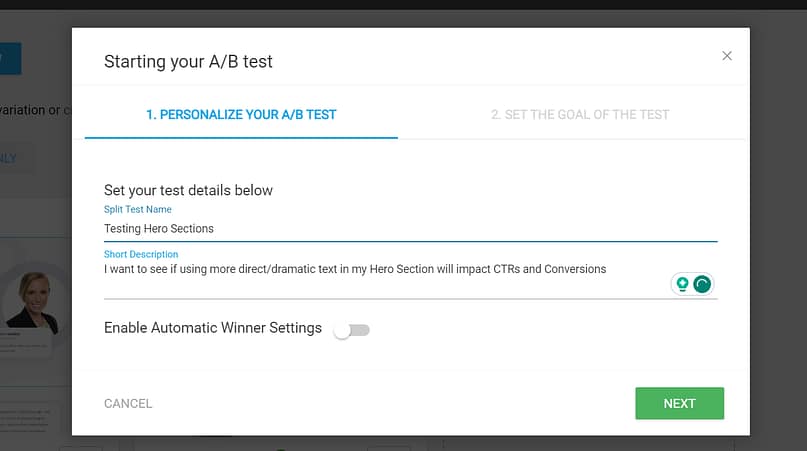

3. Create a New Test in Thrive Optimize

Once you're in the Thrive Architect editor for your control page:

4. Create Your Variation Page

Now for the fun part: creating the version you want to test against your control.

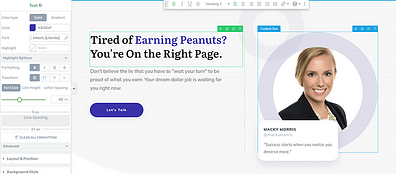

5. Edit Your Page Variation in Thrive Architect

This is where you make the specific change you decided on during your planning phase.

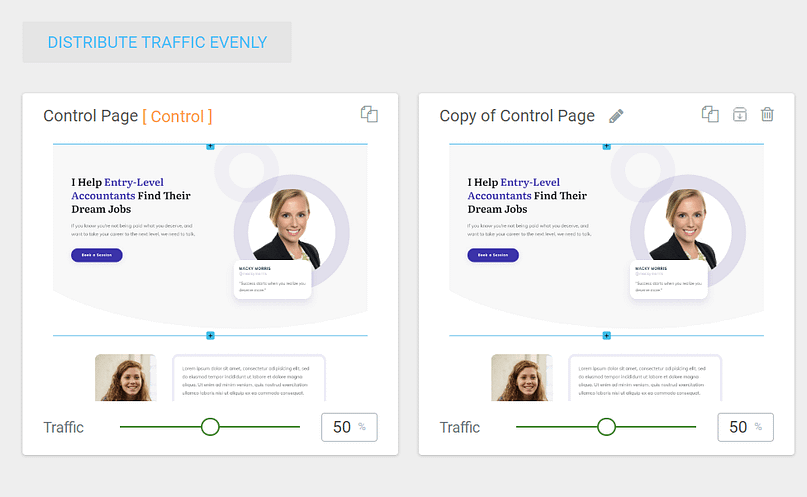

6. Configure Your Traffic Settings

Back in the Thrive Optimize dashboard, you'll see your control and your variation(s).

7. Set Up Your Test

In the top right corner of the Thrive Optimize dashboard, click Set Up and Start A/B Test.

8. Set the Goal of the Test

The next screen asks you to define your test goal. Thrive Optimize offers a few common options:

Choose the goal that aligns with your hypothesis and key metric.

9. Start Your Test!

Once you've set your goal type, click Start A/B Test.

You'll be redirected back to the WordPress editor, where you'll see an overview of your active test at the bottom of the screen. And just like that, your first A/B test is running!

What Elements Should You A/B Test on Your WordPress Site?

The beauty of A/B testing is that you can test almost anything. But to get the most impact, I suggest focusing on elements that directly influence user behavior and your business goals. Here are some common ideas that often yield interesting results for WordPress conversion optimization:

High-Impact Elements to A/B Test

| Element Type | Test Ideas |
|---|---|
| Headlines | The main headline, sub-headlines, section titles. |
| Call-to-Action (CTA) elements | Text: "Buy Now" vs. "Get Started" vs. "Learn More" |
| Images & Videos | Hero images: People vs. product, different angles. |
| Page Layout & Design | Overall structure: Long-form vs. short-form sales pages. |
| Forms | Number of fields: Shorter forms often convert better. |
| Social Proof | Testimonial placement and quantity. |
| Pricing Tables | Highlighting a "most popular" plan. |
| Navigation | Simpler menus vs. more detailed ones. |

My advice? Start with elements that are highly visible or directly connected to your main conversion goal. Sometimes, a seemingly small tweak, like adding testimonials, can lead to a significant jump in conversion rates – I've seen it happen.

Measuring Success: Understanding Your A/B Test Results

Once your test has run its course and collected enough data, it's time to look at the results. This is where you move from experimentation to informed decision-making. This is the payoff for all your website optimization efforts.

What is Statistical Significance in A/B Testing?

This is a key concept. Statistical significance tells you how likely it is that the observed difference between your control and variation is real, and not just due to random chance. When you set your "Chance to beat original" to 90% or 95%, you're saying, "I want to be at least 90-95% confident that the winning variation genuinely performs better."

If your test reaches this threshold, you can act on the results with reasonable confidence. If it doesn't, even if one variation looks better, it might not be a reliable winner. This is why running tests for long enough and having enough traffic is so important.
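Tools that report a "Chance to beat original" often compute it with a Bayesian approach. I don't know exactly how Thrive Optimize calculates its figure, so treat this as an illustration of one common method: model each version's conversion rate with a Beta distribution (a uniform prior updated with the observed counts), then count how often a random draw for the variation beats a draw for the original. The counts and the 95% threshold are example values.

```python
import random


def chance_to_beat_original(conv_a: int, n_a: int, conv_b: int, n_b: int,
                            draws: int = 50_000, seed: int = 7) -> float:
    """Estimate P(variation's true rate > original's) by Monte Carlo sampling
    from Beta(1 + conversions, 1 + non-conversions) posteriors (uniform prior)."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)  # original
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)  # variation
        wins += int(rate_b > rate_a)
    return wins / draws


chance = chance_to_beat_original(conv_a=150, n_a=5000, conv_b=190, n_b=5000)
print(f"Chance to beat original: {chance:.1%}")
print("Threshold reached" if chance >= 0.95 else "Keep the test running")
```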

Interpreting the Data

Most A/B testing tools will clearly show you which variation performed better based on your chosen goal. Look for:

- Conversion Rate: The percentage of visitors who completed your goal.

- Improvement: How much better (or worse) the variation performed compared to the control.

- Statistical Significance: The confidence level of the results.
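In many tools the "Improvement" figure is a relative lift, which is easy to confuse with the absolute difference in percentage points. A tiny sketch with example rates (3.0% for the control, 3.8% for the variation):

```python
def improvement(control_rate: float, variant_rate: float) -> tuple:
    """Return (absolute change in percentage points, relative change in percent)."""
    absolute_pp = (variant_rate - control_rate) * 100
    relative_pct = (variant_rate - control_rate) / control_rate * 100
    return absolute_pp, relative_pct


# Example: control converts at 3.0%, variation at 3.8%.
abs_pp, rel_pct = improvement(control_rate=0.030, variant_rate=0.038)
print(f"Absolute: {abs_pp:+.1f} percentage points")
print(f"Relative: {rel_pct:+.1f}%")
```

The same result can be described as "+0.8 points" or "+26.7%", so check which one your tool is showing before you report it.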

What to Do with a Winner

If you have a clear winner that has reached statistical significance:

- Set up the winner: Make the winning variation your new default page or element.

- Document your findings: Keep a record of what you tested, your hypothesis, the results, and why you think it won. This builds a knowledge base for future tests.

- Start a new test: Optimization is an ongoing process. Once you've implemented a winner, there's always something else you can test to improve further.
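A test log doesn't need special software. Here's a minimal sketch that appends one record per test to a JSON Lines file; the field names, file name, and example values are assumptions made up for illustration, with the hypothesis borrowed from the headline example earlier.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class ExperimentRecord:
    """One entry in a hypothetical A/B test log (field names are illustrative)."""
    name: str
    hypothesis: str
    primary_metric: str
    control_rate: float
    variant_rate: float
    significant: bool
    winner: str      # "control", "variation", or "none"
    notes: str = ""  # why you think it won (or didn't)


def append_to_log(record: ExperimentRecord, path: Path) -> None:
    """Append the record to a JSON Lines file (one JSON object per line)."""
    with path.open("a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(asdict(record)) + "\n")


log_path = Path("ab_test_log.jsonl")  # hypothetical file name
append_to_log(
    ExperimentRecord(
        name="Product page headline",
        hypothesis="A benefit-focused headline will increase sales because "
                   "visitors will immediately understand what we sell.",
        primary_metric="Product page conversion rate",
        control_rate=0.030,   # example values, not real results
        variant_rate=0.038,
        significant=True,
        winner="variation",
    ),
    log_path,
)
```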

What to Do with No Clear Winner

Sometimes, neither variation performs significantly better than the other. This isn't a failure; it's still a learning opportunity.

Common Pitfalls to Avoid

- Stopping too early: As I mentioned, resist the urge to stop a test just because one variant seems to be ahead. You need statistical significance.

- Testing too many things at once: Stick to one primary change per test.

- Ignoring external factors: Major holidays, marketing campaigns, or even server issues can skew results. Be aware of what else is happening during your test.

- Not having enough traffic: If your site gets very little traffic, it will take a long time to reach statistical significance, or you might never get there. Focus on driving traffic first if this is the case.

Best Practices for Successful A/B Testing

To make sure your A/B testing efforts are actually productive, I've gathered a few best practices I always keep in mind for effective WordPress conversion optimization:

A/B Testing Best Practices Checklist

- Always have a hypothesis: Don't just test randomly. Have a clear idea of why you think a change will work.

- Test one element at a time: This helps you isolate the impact of each change.

- Focus on high-impact areas: Start with elements that are important to your conversion goals (e.g., headlines, CTAs, forms).

- Run tests long enough: Aim for at least 1-2 weeks, or until you reach statistical significance, to account for traffic fluctuations.

- Don't forget mobile: Make sure your variations look and function well on all devices. Most of your traffic is likely coming from mobile, so test there too.

- Document everything: Keep a log of your tests, hypotheses, results, and learnings. This prevents you from repeating tests and helps you build a deeper understanding of your audience.

- Continuously test: Optimization isn't a one-time project; it's an ongoing process. There's always something you can improve.

- Consider qualitative data: While A/B tests give you numbers, tools like heatmaps or user recordings can show you why people are behaving a certain way. This can inform your next test ideas.

Frequently Asked Questions About A/B Testing on WordPress

Here are some common questions I hear about A/B testing on WordPress, answered directly to help you get started with confidence.

Improve Your WordPress Site for More Conversions

A/B testing is a powerful tool, but it's part of a bigger picture. If your website isn't designed with conversions in mind from the start, even the best A/B tests will only get you so far. You need a solid foundation.

If your pages just aren't converting, or if you're struggling to create the kinds of pages you want to test, it might be time to look at your core tools. I often recommend Thrive Suite because it provides a complete toolkit for building conversion-focused WordPress websites, from page builders to lead generation tools and, of course, A/B testing with Thrive Optimize.

It's about having the right tools in your hands so you can focus on the strategy, not the technical hurdles. Start testing, start learning, and watch your WordPress site become the conversion machine you always knew it could be.