No, that isn’t a clickbait title... it’s the real deal.

We really did instantly boost our sales page conversion rate by a staggering 25% with one simple change. This isn’t a sensational claim built around a rogue sales spike either – it’s a consistent and reliable increase in daily sales with rock-solid proof.

Want to know which secret sauce we added?

Want to know how you can do the same?

Of course you do!

Let’s get started...


Here at Thrive Themes, we’re fanatical about building conversion-focused websites.

Read any of our posts or watch our videos, and you’ll hear us harping on about testing every element of your sales pages: the headline, copywriting, layout, pricing, calls-to-action and pretty much anything else that can be swapped out and analyzed.

Why?

Because it works.

And because you have all the tools you need within Thrive Suite.

The exact same tools that we used to improve sales by 25%.

Enough chit-chat. Let’s break down how we set up the sales page A/B test, and then deep dive into the results.

What is an A/B Test?

By comparing the conversion rate of slightly different versions of the same sales page, you can identify the winning version and improve future sales performance.

We call these versions A and B, or the Control and the Test.

No matter how many variants you’re testing at the same time, it’s still just called an A/B test.
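To make the comparison concrete, here’s a minimal Python sketch of the two numbers every A/B test boils down to: each variant’s conversion rate (sales divided by visitors) and the relative lift of the test over the control. The function names are illustrative, not part of Thrive Optimize.

```python
def conversion_rate(sales: int, visitors: int) -> float:
    """Share of visitors who bought: 0.022 means a 2.2% conversion rate."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return sales / visitors


def relative_lift(control_rate: float, test_rate: float) -> float:
    """Relative change of the test over the control: 0.25 means +25%."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (test_rate - control_rate) / control_rate


# The two conversion rates reported for this post's test: 2.2% and 2.75%.
lift = relative_lift(0.022, 0.0275)
print(f"Absolute change: {(0.0275 - 0.022) * 100:.2f} percentage points")
print(f"Relative lift:   {lift:+.0%}")
```

Note the difference between absolute and relative change: going from 2.2% to 2.75% is only 0.55 percentage points, but it’s a 25% relative lift, which is the figure quoted in this post.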

The Opportunity

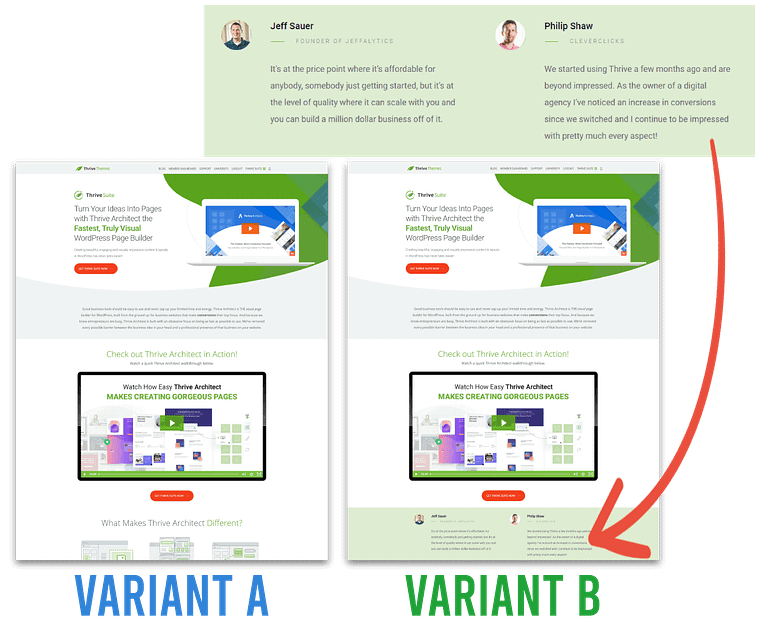

We’re always talking about the power of using testimonials as social proof for your sales pages. They let your happy customers share positive experiences and results, which – in theory – should encourage new visitors to buy your product.

So we were a little embarrassed to discover we’d actually forgotten to add testimonials to our Thrive Architect sales page!

With so much historical conversion rate data, this was a perfect opportunity for an A/B test... both to improve our revenue AND to share the results on the blog so you can improve your website too.

Here’s the question we set out to answer...

“Will adding testimonials to an existing sales page have a noticeable impact on conversion rate?”

Setting up the A/B Test

To get started with the test, we used Thrive Optimize, our powerful WordPress plugin for A/B testing landing pages. I won’t go through the technical setup here, as you can find all that in our guide: Create Your First A/B Test Using Thrive Optimize.

We sent 50% of traffic to the original sales page, and 50% to the new variant with added testimonials.
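Thrive Optimize takes care of the traffic split for you, and this post doesn’t describe how it assigns visitors. Purely as an illustration, one common technique is to hash a stable visitor ID, so a returning visitor keeps seeing the same variant while new visitors spread roughly 50/50. The `assign_variant` function, the `visitor_id` format and the test name below are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, test_name: str = "sales-page-test") -> str:
    """Return 'A' (control) or 'B' (test) for a visitor, the same every time.

    Hashing the test name plus visitor ID gives a stable, roughly uniform
    bucket from 0 to 99; buckets 0-49 go to A and 50-99 go to B.
    """
    key = f"{test_name}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return "A" if bucket < 50 else "B"


# Simulate 10,000 hypothetical visitors and count where they land.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Over many visitors the split lands close to, but rarely exactly at, 50/50, which is why small differences in traffic between variants are normal.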

The only difference between the pages was the presence of two testimonials.

Our original Thrive Architect sales page converted at about 2.2%, pretty standard for a public sales page with a variety of traffic sources. We were hoping to see the new variant produce a consistent improvement over 2.2%.

Rather than check in constantly, we enabled the Automatic Winner Settings – this automatically disables the losing variant once your A/B test has collected enough data and confidence to reliably know the winning page is a long-term winner.

... And then we let the test run for 6 weeks.

The Results

Drum roll, please...

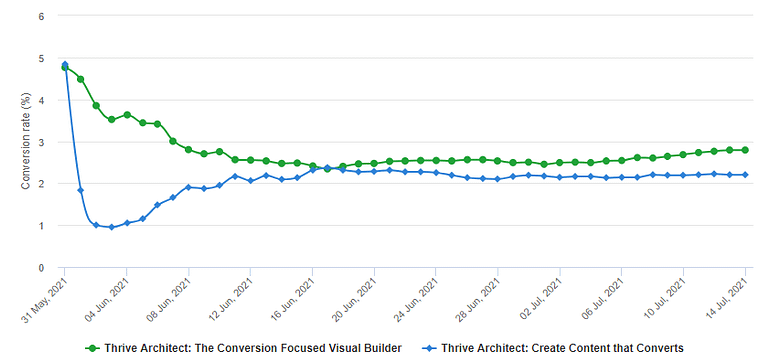

After 6 weeks of equal visitor traffic, the sales page A/B test results looked like this:

Header | Sales page A (no testimonials) | Sales page B (with testimonials) | Difference |

|---|---|---|---|

Traffic | 10,398 | 9,663 | Cell |

Sales | 161 | 182 | +13% |

Revenue | 48% | 52% | +8.3% |

Revenue per Visitor | - | - | +19.6% |

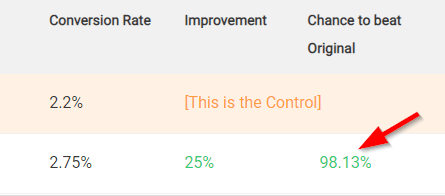

Conversion Rate | 2.2% | 2.75% | +25% |

Every important metric saw a significant improvement thanks to the addition of a few testimonials!

Here’s the daily conversion rate of the control (blue) and test (green) variants:

All A/B tests need time to collect data before they show a consistent pattern.

How do we know this 25% improvement came from the testimonials?

Aside from adding the 2 testimonials under the video, we made no other changes to the sales page over the 6 weeks.

The page variants were served to a random 50-50 distribution of visitors, meaning that seasonality and other external factors affected both landing page variants equally.

How confident are we that the winning variant will consistently perform better?

Oh, we’re extremely confident.

The data shows a 98.13% chance that the testimonial variant will beat the original variant.

A confidence score of greater than 95% means we can be fairly certain that the winning variant will continue to outperform the original, even with more visitors, sales and time.

You can safely choose winners when your confidence scores are greater than 95%.
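This post doesn’t say how Thrive Optimize calculates its “chance to beat original”, so the sketch below is not its method. It shows one common approximation: treat each variant’s conversion rate as roughly normally distributed and ask how likely it is that B’s true rate is higher than A’s. The visitor and sale counts in the example are hypothetical.

```python
import math


def chance_to_beat(sales_a: int, visitors_a: int, sales_b: int, visitors_b: int) -> float:
    """Approximate probability that B's true conversion rate is higher than A's.

    Normal approximation to the difference between two proportions.
    """
    p_a = sales_a / visitors_a
    p_b = sales_b / visitors_b
    standard_error = math.sqrt(p_a * (1 - p_a) / visitors_a + p_b * (1 - p_b) / visitors_b)
    if standard_error == 0:
        raise ValueError("need at least some sales (and non-sales) to compare")
    z = (p_b - p_a) / standard_error
    # Standard normal CDF of z, written with the error function.
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))


# Hypothetical counts: 2.0% vs 2.5% conversion on 10,000 visitors each.
p = chance_to_beat(200, 10_000, 250, 10_000)
print(f"Chance B beats A: {p:.1%}")
```

With these made-up counts the result lands at about 99%, above the 95% threshold. Different tools use different statistics (Bayesian models, sequential tests), so expect figures like this to differ somewhat from tool to tool.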

Why was the conversion rate for both variants significantly higher at the start?

The first few days of an A/B test are always volatile. Randomness, return visitors and other oddities usually produce erratic spikes and valleys. You always need some time to get through that initial randomness.

No matter how much traffic you’re getting, we always recommend ‘setting and forgetting’ your A/B tests. Come back to them after a couple of weeks (2 weeks being the minimum to limit randomness based on the day of the week) so you’re not trying to make important business decisions without enough data to produce consistent results.
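How much data counts as “enough” depends on your baseline conversion rate and the size of change you want to detect, not just the calendar. Below is a rough planning sketch using the standard sample-size formula for comparing two proportions, seeded with this post’s 2.2% baseline. The 95% confidence level, 80% power and 500 visitors per day are assumptions for illustration, and the helper names are hypothetical; this isn’t how Thrive Optimize decides anything.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant (two-sided two-proportion z-test)."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # ~1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # ~0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_duration_days(n_per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Days to collect the sample, rounded up to whole weeks, never under 2 weeks."""
    days = math.ceil(n_per_variant * variants / daily_visitors)
    weeks = max(2, math.ceil(days / 7))
    return weeks * 7


for lift in (0.25, 0.10):
    n = visitors_per_variant(0.022, lift)
    print(f"{lift:.0%} lift on a 2.2% baseline: ~{n:,} visitors per variant, "
          f"{estimated_duration_days(n, daily_visitors=500)} days at 500 visitors/day")
```

The same formula shows why smaller expected changes take much longer to test: detecting a 10% lift on the same baseline needs several times more visitors than detecting a 25% lift.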

Why is there such a big difference in conversion rate during the first week?

This is the classic pattern of many conversion rate A/B tests!

The first few weeks are a wild ride (see above), and it’s very tempting to make important decisions based on early results.

Resist this temptation!

As more data is collected, most A/B tests start to converge, with variant performance getting closer and closer together. Eventually they’ll either merge (meaning there’s no discernible difference) or one variant will maintain a slight lead (meaning you’ve just increased your conversion rate!).
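A quick simulation shows why early results swing so much. The sketch below runs a simulated A/A test: two “variants” with the same true conversion rate (this post’s 2.2% baseline), so any gap between them is pure noise. The daily traffic of 240 visitors per variant, the 42-day length and the random seeds are hypothetical.

```python
import random


def simulate_cumulative_rate(true_rate: float, daily_visitors: int,
                             days: int, seed: int) -> list[float]:
    """Cumulative conversion rate at the end of each day for one simulated variant."""
    rng = random.Random(seed)
    visitors = 0
    sales = 0
    history = []
    for _ in range(days):
        for _ in range(daily_visitors):
            visitors += 1
            if rng.random() < true_rate:  # each visitor buys with probability true_rate
                sales += 1
        history.append(sales / visitors)
    return history


# A/A test: both "variants" share the same true 2.2% conversion rate.
a = simulate_cumulative_rate(0.022, daily_visitors=240, days=42, seed=1)
b = simulate_cumulative_rate(0.022, daily_visitors=240, days=42, seed=2)
for day in (1, 3, 7, 14, 28, 42):
    gap = abs(a[day - 1] - b[day - 1])
    print(f"day {day:>2}: A={a[day - 1]:.2%}  B={b[day - 1]:.2%}  gap={gap:.2%}")
```

In the first few days a single extra sale moves the cumulative rate noticeably, so sizeable gaps can appear between identical variants; as visitors accumulate, each line tends to settle toward the true rate. The exact numbers depend on the seeds.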

Thrive’s Golden Rules of A/B Testing

I bet you’re feeling the urge to run some A/B tests of your own.

It’s easy with Thrive Optimize (which we used to run our tests), but there are also some important ‘rules’ that help you avoid the common pitfalls that first-time A/B testers learn the hard way.

1. Set it and forget it... resist the urge to make decisions before you have enough data.

2. Test bigger changes first. Bigger, more obvious changes will improve your conversion rate faster (and with more confidence) than smaller, cosmetic changes.

3. Try to obtain a confidence score of 90% or higher before choosing a winner. Greater than 95% is best.

4. Change NOTHING else during the test.

5. Don’t run ads or other campaigns to the variants during the test, unless they will run consistently. Changes in traffic sources and quality will affect your conversion rate.

6. A/B testing is an iterative process... Once you’ve identified a clear winner, test something else.

7. Save your time and sanity by enabling automatic winner selection.
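Rules 1, 3 and 7 can be combined into a simple decision function. This is a hypothetical sketch of the kind of check an automatic winner setting performs, not Thrive Optimize’s actual logic: the 14-day minimum and 95% threshold come from the rules and advice above, and `ExperimentStatus` and `pick_winner` are illustrative names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExperimentStatus:
    days_running: int
    chance_b_beats_a: float  # 0.9813 means a 98.13% chance that B beats A


def pick_winner(status: ExperimentStatus, min_days: int = 14,
                threshold: float = 0.95) -> Optional[str]:
    """Return 'A' or 'B' once the rules allow a decision, otherwise None."""
    if status.days_running < min_days:
        return None  # Rule 1: don't decide on early, volatile data
    if status.chance_b_beats_a >= threshold:
        return "B"   # Rule 3: confident that the test variant wins
    if status.chance_b_beats_a <= 1 - threshold:
        return "A"   # Confident that the control wins
    return None      # Not confident either way yet: keep the test running


print(pick_winner(ExperimentStatus(days_running=42, chance_b_beats_a=0.9813)))  # B
print(pick_winner(ExperimentStatus(days_running=5, chance_b_beats_a=0.9813)))   # None
```

Fed this post’s result (98.13% after 6 weeks), the function picks variant B; fed the same confidence after only 5 days, it keeps waiting.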

Ready to Run Your First Sales Page A/B Test?

Split testing your sales page doesn’t have to be complicated, and you absolutely do not need to redesign massive sections to see consistent improvements in conversion rate.

We added TWO testimonials and boosted our conversion rate by 25%!

Here’s what I want you to do: post your sales page in the comments below, and the Thrive team will offer suggestions on what you could easily A/B test.

It’s a great chance to show everyone your website and get valuable feedback from the conversion rate experts here at Thrive.

Here’s my copy of the sales page: http://www.mijnvoedselintolerantietest.nl/voedselintolerantietest-on-line-kopen

Looking forward to your suggestions.

Johan

Hi Johan,

Here are the things I would test:

– Simplify the above-the-fold area! There’s A LOT going on there and it’s very busy. The menu and the CTA button are competing for attention. If this is your main sales page, think about removing the header or moving it to the bottom of the page.

– The main CTA button leads to a not-very-attractive overview of your products, even though there is a clearer overview just beneath it. I would consider removing the text right after “Wat is de beste test voor mij” (“What is the best test for me?”), or I would make the jump link go to the column overview instead of the title and the text.

– I would switch the visual importance of the “direct bestellen” (order now) and “lees meer” (read more) buttons. At the moment, it’s more appealing to click on “read more” than on “buy now”, which is probably the opposite of what you want 🙂

In our context, the conversion happens outside the WordPress instance, in our own subscription management system. Users get there by clicking on the CTA. We can’t use any of the three types of goals implemented in Thrive.

Interesting use case. Can you share more about your funnel, so we can perhaps suggest an alternative solution?

We use Thrive as our landing page tool, but the CTAs lead to our application, where the subscription management (= conversion) is handled. So basically the only kind of conversion that happens in Thrive is a click on a button that leads to an external domain.

With all other things equal (not changing the checkout page or checkout flow) testing the click on the button would still be a useful A/B test to run.

The more people click on the buy now button, the more people will go through the full checkout.

You won’t be able to see the revenue, but if the rest of the funnel stays the same, you should still be able to increase sales 🙂
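The reasoning here is multiplicative: sales = visitors × click rate × checkout rate. If the checkout flow stays the same, the checkout rate is a constant, so a relative lift in clicks carries straight through to sales. A minimal sketch with hypothetical numbers; `expected_sales` is an illustrative helper, and the constant checkout rate is exactly the “all other things equal” assumption above, which is worth sanity-checking against your subscription data.

```python
def expected_sales(visitors: int, click_rate: float, checkout_rate: float) -> float:
    """Expected sales when the landing page only measures the CTA click.

    checkout_rate is the share of clickers who go on to subscribe in the
    external application; here it is assumed to be the same for both variants.
    """
    return visitors * click_rate * checkout_rate


# Hypothetical numbers: same traffic and checkout flow, different click rates.
control = expected_sales(5_000, click_rate=0.08, checkout_rate=0.30)
variant = expected_sales(5_000, click_rate=0.10, checkout_rate=0.30)
print(f"control: {control:.0f} sales, variant: {variant:.0f} sales")
print(f"click lift: {0.10 / 0.08 - 1:+.0%}, sales lift: {variant / control - 1:+.0%}")
```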

Here’s my sales page:

https://relievingthatpain.com/stretching-blueprint/

I look forward to hearing what you think!

Hey Stephen. Your intro video is great!

* Test disabling the free mini course popup on the sales page. It’s a big distraction from the main CTA of purchasing your paid guide.

* Test a gallery of testimonials, maybe 2 rows of 3, with photos of real customers, so your visitors can see social proof and feel reassured.

* Test leading with your visitor’s big pain points as a prominent styled list under the video. e.g.

Does any of this sound familiar?

– I feel so inflexible regardless of what I do

– I don’t feel like I can stretch properly

– I just don’t believe that stretching is actually helping

etc.

Then this is the perfect guide for you!

Thank you so much. As a newbie to A/B testing, I was wondering how to get started.

Is it worth doing if your product is new and you don’t regularly get 100s of sales yet?

My page is here

https://ifilovedmyself.com/course-for-practitioners/

Neat course, Eilat! I love your landing page! FYI, on your sales page the newsletter sign-up still has the lorem ipsum text. It’s probably a setting in your theme builder that you have not updated.

Adam, thank you so much!! I didn’t notice the lorem ipsum!! I’ll go change that.

And thanks for the kind words of affirmation.

Great video that really speaks to the internal dialog and struggles so many people have.

Ideas for A/B tests:

* Test moving the enrolment date countdown to the very top, maybe even making it sticky so it’s always visible with a CTA button.

* Test adding a few of the most powerful testimonials to earlier in the page.

* Test a radically different headline. Once you have a winner, test another headline. Repeat!

Thank you!!! This is super helpful and I will try them all. One at a time of course, as your article suggests. I so appreciate your feedback.

I LOVE how to-the-point this is with step-by-step “if you want to do A/B tests, follow these rules” — shows just how much you care about helping your customers succeed 🙂

I’m glad it shows, Christina. The more our customers succeed, the more people become Thrive Themes customers… it’s a win-win situation.

Hi David and Thrive-Team,

Thank you for that blog post! I’d very much appreciate your comments on fertighaus-webinar.de

Thank you in advance & best regards, Guido

Great headline. It’s super focused and suggests there are big pitfalls to avoid for the uneducated when buying a prefab house.

Here’s what I would test:

* Test improving the readability and contrast throughout the sales page. Now you have thin grey text on a white background, so visitors may feel overwhelmed or lost… you’re asking your writing to do a lot of heavy lifting where other visual elements could really help.

* Test making the 3 benefits much more prominent. I would suggest a 3-column element with styled content boxes. Shout about the benefits of your webinar with confidence.

* Test some screenshots from previous webinars, so people know what to expect. Show a sense of community and social proof with other attendees in the screenshots.

Great post!

Thank you! I’m glad you found it useful.

This is such a great post! I’ve been considering dabbling in A/B testing my sales pages but was hesitant since I didn’t know where to start. This was perfect.

Here’s my sales page: https://thrivingscribes.com/author-platform-content/

I look forward to the feedback!

Thanks Brit! Let’s take a look at your website…

I LOVE that you’ve included a video at the top. And the animated GIF! 🙂

* Test a different style of video. Right now, you have a narrated tour of the platform, so maybe try a more promotional version. Something like “You’re a writer, and writers WRITE. But I bet you also know that successful authors do so much more. You also need to manage your online brand and platform to reach new readers and sell more books. Here’s how the Author Platform Content Toolkit can help you do just that, so you can focus on writing, publishing, and growing your readership…”

* Test moving the prompts 3-column section higher. It’s a tangible benefit that’s easy to understand.

* Test moving your payment form to a second page, so people must enter a minimal funnel without distractions.

Thank you so much! Will definitely begin testing these out. Appreciate it.

Assuming you intended to have a 50% / 50% split, a Sample Ratio Mismatch (SRM) check indicates there might be a problem with your distribution.

I didn’t expect to see SRM discussed here, that’s a great addition to the discussion!

For those reading who are unfamiliar with SRM: it’s when the actual split of visitors differs from the intended split (in this case 50/50) by more than random chance would explain, and that difference can be a sign that something has gone wrong in the test methodology or technology.

Thrive Optimize is pretty clever with how it distributes A/B test visitors, and we see reliable increases in sales/revenue as a result. But I’ll definitely ask our technical team to share their thoughts on how the visits are split between the variants. Thanks Vincent!
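For anyone who wants to run the check Vincent mentions, a common approach is a chi-square goodness-of-fit test on the visitor counts. Because SRM checks are run on every test, a strict threshold such as p < 0.001 is often used. The minimal sketch below uses the traffic figures from the results table; the threshold is a convention rather than something Thrive Optimize reports, and `srm_p_value` is an illustrative name.

```python
import math


def srm_p_value(visitors_a: int, visitors_b: int, expected_share_a: float = 0.5) -> float:
    """Chi-square goodness-of-fit p-value (1 degree of freedom) for a two-way split."""
    total = visitors_a + visitors_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((visitors_a - expected_a) ** 2 / expected_a
            + (visitors_b - expected_b) ** 2 / expected_b)
    # For 1 degree of freedom, P(chi-square > x) = erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


# Visitor counts from the results table in this post.
p = srm_p_value(10_398, 9_663)
print(f"SRM p-value: {p:.2g} (flag if below 0.001)")
```

With 10,398 vs 9,663 visitors, the chi-square statistic is roughly 27, well past the p < 0.001 level. A result like this doesn’t say which variant is better; it flags the split itself as worth investigating (for example, how bots, caching or redirects are handled).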

Thank you for your great article. I definitely have to try out the A/B-testing much more.

Here is my sales page:

https://www.babyschlummerland.de/schlummerkoenig/

Excited to hear your thoughts about it.

Sarah

What an interesting niche, Sarah. Thanks for sharing.

I notice your “Ja, Will Ich!” (“Yes, I want it!”) jump links are not working, and your pricing table only shows the ‘Normalpreis’ (regular price) with a strikethrough (no actual prices). I would fix these first, regardless of A/B testing.

A/B test suggestions…

* Test moving the countdown timer to the top, and consider making it sticky.

* Test adding customer photos to the testimonials, so your visitors can feel a connection with real mums like them.

* Test adding a short video to the top. Perhaps testimonial snippets interspersed with sleeping babies.

Thank you SO much for this opportunity, David! Our sales page is related to our Christian weight loss membership program here: https://takebackyourtemple.com/program

Lovely video to lead with and social proof with the publication logos. I also love the Thrive Apprentice screenshot! I think there’s a ton of opportunity to make this sales page work better for you and your audience.

* Test breaking up the page into clear sections with different background colors or separators. Right now, it’s a continuous page of text some people can find overwhelming and confusing.

* Test a row of 3 short but powerful testimonial snippets directly under the first ‘Become a Member’ button. Remember most people will only scroll a few times before deciding to continue (or leave!) so try to test different elements early that appeal to different people.

* Test an alternative conversion funnel. Right now, clicking your ‘Become a Member’ button takes the visitor to another sales page (your confirmation page) where they have to scroll down and click another button. This is sure to limit your conversions.

Thank you so much, David! These recommendations make sense and will test them. God bless you 🙂

Thanks so much for the tip, David! I will do more A/B tests now.

Here is my sales page (in French; hopefully it’s still OK to get some feedback):

https://simplementrebelle.com/confiance44/

Thanks! Claire

I love the energy on this sales page, Claire. C’est génial (it’s great) 🙂

OK, A/B suggestions…

* Test making your 2-button header sticky so it follows you as you scroll. Once it’s out of view, I count 16 mouse wheel scrolls before I see another CTA.

* Test a short video right at the top, including some powerful, emotional testimonials from past clients.

* Test a new layout of your benefits under the heading “VOICI CONFIANCE44, LA FORMATION…” (“HERE IS CONFIANCE44, THE COURSE…”). Right now, these are simple text, so it’s possible visitors skim past without reading. I would consider 2 rows of 3 columns, with nicely designed content boxes for each.

Thank you, David, for your advice! I will definitely apply these 3 suggestions to my sales page. All the best, Claire

Hi.

Yes. Testimonials do help.

Here is my sales page, let me know what you think.

https://www.listbuildingclick.com

I think what this page is missing is proof. Real screenshots, numbers, perhaps a chart of growth over time.

Right now, you’re making sensational claims without any grounded evidence. Without proof, it might come across as unbelievable to visitors.

So I’d strongly recommend A/B testing your sales page with more prominent testimonials, numbers, a chart, or even videos/tweets of happy customers.

Nice post, David. Ah, testimonials! Yes, so critical for conversions, that I have a whole bunch of them on my website at https://S3MediaVault.com . But would still like to get some feedback about what else I could split test.

* Definitely test a benefit-focused headline. “S3MediaVault” doesn’t convey any benefit to the visitor. I realise this is your homepage, however, so consider turning S3MediaVault into a text-based logo instead, so you can lead with a compelling headline.

* Test a shorter cut of your intro video. Maybe you can re-cut a 3 minute version instead of 14 minutes.

* Test much earlier CTAs or buy buttons. It’s clear you’re passionate about how your product works and what it can do, but give people an easy way to buy if they’re already convinced.

Thanks David. I’ve used the A/B test in the past and we weren’t having enough conversions at that time to make it work. Any advice on A/B testing when you aren’t having many conversions? Also testing takes time, what order would you test things (headline, buttons, sections, etc.)?

Here’s my current page. Just used one of your templates and filled it out, still needs lots of work. Thanks for the input!

https://www.soundbalancept.com/unsteady-to-ready-8-week-self-paced-balance-course/

Great question. A/B tests need to collect enough data to be confident your decisions are based on a reliable, consistent pattern. So I recommend leaving your A/B tests to run for much longer.

The issue you may run into with low traffic/conversions is that you can’t make ANY changes to the test page, funnel or traffic sources while running an A/B test… so this might not be viable if you need to run it over a number of months.

Also, avoid running too many tests at the same time, as you’ll be splitting your traffic and making the problem worse.

Instead, consider running a ‘set it and forget it’ A/B test for a long time on something that you’re sure will stay the same, such as your about page or contact page.

Then you can focus on more active methods of increasing traffic in the meantime, until you have enough to run shorter sales page A/B tests.

Here are some suggestions:

* Test flipping your header section, so you’re leading with the audience-focused questions in a larger font, before you state the course name.

* Test a sticky CTA banner that follows you as you scroll. Your page is quite long and the first buy button doesn’t appear until over halfway down.

* Consider the average age (older) and objections (price, scam?) of your audience. How can you make your page less reliant on them reading all the text and more focused on alleviating their fears right at the start? Maybe testimonials, or a short intro video, or even your guarantee.

https://we-simplified.com/mastery

Thanks Trish. Here are my suggestions…

* Test replacing the solid blue header with a gorgeous full-width image that speaks to your audience, with the headline in a solid white block to stand out.

* Test simplifying the payment options. It’s a little confusing for the first-time visitor with so many dollar amounts, so it’s not immediately clear what the price is.

* If you have one available, test a short video at the top of the page.

Thank you

Excellent post! It makes me think about testing so many elements in my site.

It’d be nice if you guys could check my sales page: https://www.sinjania.com/curso-de-novela

Thanks

Hi Eduardo,

Glad to hear!

Full disclosure, my Spanish is limited so I used Google translate to get by 🙂

Things I would test:

– Replace the title, which is currently the name of the program, with the benefits people will get from taking the course. You’re hinting at it a bit in the tagline, but you can go further. What’s the main problem your program is solving? Make that your title.

– I had to scroll all the way down to understand this was a video course; the image does not do the course justice. I would replace the image with something like this: https://share.getcloudapp.com/jku4L6wg You can load one of the landing pages on a new page, simply save this image as a template, and insert it on your page (no photo editing tools needed 😀).

– The page is very text-heavy. Try breaking it up a bit. We call these “illustrated lists”; you can see what we mean in the landing page blocks. It’s a nice way to break up a wall of text with elements like icons, column layouts and images.

Let us know how that goes!

I’d love to know how I can improve my conversion rate here: https://timbaderonline.com/4-steps-prophecy-school/

I’d like to do more A/B testing of my pages, particularly my sales pages but I don’t really get enough traffic (even bearing in mind the bowling ball vs feather analogy).

The above page only gets an average of 25 – 30 visits per month, so even if I have a 2% conversion rate, then an automated test will take forever.

What can we do to test pages like this in those kinds of low traffic situations? In other words, how can I get from “occasional sales” to regular sales, every month/week/day?

Hi Tim,

I wrote a post about A/B testing low traffic websites: https://thrivethemes.com/ab-test-low-traffic/

The main advice if you only get a couple of visits to your sales page is to ask “what’s higher up the funnel?”

E.g., do you have an opt-in form that then pitches your sales page? In that case, you can start by A/B testing your opt-in form.

Suggestions for the sales page:

– Add a benefit to the title. You can find the benefits of the program by adding “so that you can” or “this will allow you to” to your main headline (which is more feature-based at the moment). So: “Prophesy With Confidence, Today!” so that you can / which will allow you to… Whatever comes after is the real benefit of your product.

– The product images are still the stock images. Having real product images of the inside of your product will make it more “real” (you can click on the computer screen images, they are actually content boxes and you can simply replace them with your own screenshots)

– When you scroll, there’s a ribbon on the page announcing a 20% discount but the pricing table doesn’t mention this which is confusing and will for sure turn off buyers. On the page, this section is right under the screen representation of the course and if you don’t want it you can simply delete or change it from there: https://share.getcloudapp.com/7KuEPXjA

I would definitely change the light grey body font to black or 80% black. No idea WHY people on so many sites are using light grey font color for the body THAT IS SO HARD TO READ…especially for older folks.

Hi Paul, thanks for your feedback, much appreciated.

I didn’t think the grey text was that light but it’s obviously causing an issue for you.

I will consider how best to make it more readable!

Thanks for this post! I actually had an amazing increase in lead inquiries once I put testimonials on my Contact page as well! (I wish I had done an official A/B test on it, but the increase was significant and noticeable.)

Here is my homepage as this is the landing page my ads go to: https://vanessanicole.com/

I’d love to get your feedback on what I could A/B test. 🙂

Thanks!

Hi Vanessa, that’s great for the contact page! And sometimes you don’t need an A/B test to know the results were there 🙂

For the page you shared I would test:

– A headline that gives the benefits for the giver instead of the receiver (if the one giving the ring is the target for this sales page)

– I’d add a section on “what will happen next” explaining the process to alleviate doubt and fear.

– I’d add a section to highlight your guarantee (right now it’s a fairly small bullet point, even though it’s a REALLY strong guarantee).

Thank you so much!! 🙂 Great ideas to test!!

Kindly,

Vanessa

Wow, thank you for offering ideas on what to test!

Here is our sales page: https://www.swingliteracy.com/ddp-bootcamp-teacher-track

What would you recommend?

Hi Andrew, nice work on your sales page!

Here’s some A/B test ideas:

– Right now, the first thing that draws my attention above the fold is the text that says “Swing Literacy” and “Bootcamp Teacher Track”. Try de-emphasizing those elements by making them smaller or moving them outside of the main content box, and use a benefit-driven headline in their place. (You may need to test a few different headlines.)

– Congrats on having lots of fantastic testimonials! You could try breaking up that large section of testimonials into 3 or 4 testimonial sections, and introduce your best testimonials closer to the top of the page.

– I didn’t see a section explaining any kind of guarantee. You might want to try adding a guarantee section, if you offer one.

– If you have the time and equipment to make a sales video, you may want to add a video above the fold introducing yourself, the challenges your program solves, etc.

Thank you!

I would love to hear your feedback on my sales page.

This is my sales page: https://workoutable.com/hand-portion-and-macro-guide/

Hi Arun,

Nice use of the consultant landing page set!

What I would test:

– Remove the notification about accepting browser notifications, it takes away from the purpose of the page

– Add the price on the page. Right now, a lot of people might click just to find out the price, and you can’t do any price anchoring because you’re “hiding” the price.

– Your title makes it look like this is something individual, but the rest of the page talks about a “guide”, which makes me believe it’s not personalized ==> as a confused reader, I wouldn’t buy. Try clarifying the offer: is it personalized advice or a guide? If it’s personalized, how will you get my information? Explain the exact steps a customer would take.

Hey David,

I used one of your Architect templates and changed the design/text as I wanted.

Some of the original template text and pictures are still there and still need to be changed.

What do you think?

https://www.biohacking-chris.de/morgenroutine/

It’s an online course about creating a great morning routine.

Looking forward to your answer.

Wow, great post, I always knew testimonials had an effect, but did not realise it was this much. Will place them more prominently on my website now.

Also, the fact you offer advice on possible split testing is great. My website homepage is https://www.clilmedia.com/, currently I have no sales pages online (no promotions going on). Would love to hear what you think!

You guys never cease to amaze me with valuable content and ideas, thanks!

Thank you so much for writing this guide, to be honest. I have actually implemented the key points you mentioned on my landing page, and I will surely come back here to share whether it boosted my conversion rate like it boosted yours. Thank you for writing such curated information. Loved it <3

Great! thank you for suggesting ideas on what to test!

https://gatikedu.com/

I love this. Thanks!

staging.MyDIYhealth.com/DIY-lymph-massage

I was thinking of testing the offer first – a discount for completing a 3-question marketing insights survey vs a Buy-One-Give-One (to a person/charity in need) offer. What else could you suggest testing?

Such a helpful article. I’m super looking forward to your suggestions!

https://awishformorewishes.com

I’d LOVE it if Thrive provided a way to split test based on link or button click. As it is, I can only run an A/B test if the following page is on the same site.