Looking for the ultimate website optimization checklist to transform your website from good to amazing in 2025?

You’ve come to the right place.

Nowadays, just having a website isn’t enough. You need to deliver a top-notch experience to visitors, optimizing every element to ensure peak performance, maximum engagement – and more conversions.

But here's the catch: a subpar website can do more harm than good. It can push potential customers away, harm your brand's reputation, and ultimately, hamper your digital marketing strategies.

That's exactly why we've crafted the 'Ultimate Website Optimization Checklist for 2025'. It's your comprehensive guide to elevate your website’s performance and user experience.

Keep reading to discover an easy breakdown of how to transform your website into a memorable (and profitable) online experience.

How Optimizing Your Website Can Boost Your Online Success

The goal of your website is to communicate your brand’s message, engage with your target audience, and drive conversions.

This is your digital hub, where visitors come to learn about your products and services, and why they should buy from your business.

Every part of your website needs to work towards these objectives – from design to content, functionality, and user experience.

Your website also needs to be favored by search engines, so your target audience can find it when they search for topics related to your business.

Now, if you’re a business owner with limited time or technical experience, trying to optimize your website on your own could feel like a big, overwhelming task.

Where do you start? How do you start?

Not to worry.

We’ve broken down the core tasks of website optimization into easy, actionable subtasks you can execute on your own.

Website Optimization Strategies: Let’s Dive In

This guide includes key tips and website optimization tools you should use to improve your site.

Key Essentials Before We Start:

Choose a Reliable Hosting Provider

A huge chunk of your site’s performance and security will depend on the hosting provider you choose.

A good host ensures fast loading times, minimal downtime, and robust security, which are all essential for a positive user experience and search engine rankings. And a bad host? The total opposite – which will cost you a lot of money in the long run.

When choosing a hosting provider, pay attention to speed, uptime guarantees, customer support availability, security features, and scalability.

Use WordPress as Your Content Management System

There’s a common misconception that WordPress is hard to use for beginners, and non-techie users should opt for hosted website builders like Wix, Webflow, Squarespace, or Weebly instead.

But WordPress is actually known for its ease of use, and it offers far more benefits than any of the hosted site builders out there.

Its learning curve is minimal and you don’t need to be an expert in HTML or CSS to build an impressive WordPress site.

You can use a wide variety of templates and plugins to create a business website, blog, professional portfolio, or any other site you’d like.

WordPress is also written using high-quality code, making it easier for WordPress websites to be discovered by Google and other search engines.

So, if you’re looking to achieve the ultimate website optimization and stand out from the competition – you need to use WordPress.

And now that we’ve got that out of the way, let’s dive into this checklist:

1. User Experience (UX) Optimization

User experience (UX) describes how visitors interact with your website. Good UX makes a website easy and enjoyable to navigate, encouraging your audience to stay longer and engage more.

This increases user satisfaction, reduces bounce rates, and also strengthens your brand’s reputation – leading to more conversions.

Also, search engines favor websites with good UX, which can lead to better SEO rankings, more organic traffic, and more customers.

The key components of a good user experience are:

Navigation Ease

Straightforward, seamless navigation is the cornerstone of good UX. A well-structured navigation system helps your visitors find what they’re looking for quickly and without hassle.

Key elements of navigation ease include:

A clear, consistent main menu (usually a dropdown menu) that serves as the roadmap for your website

Logical, well-thought-out page organization that creates a natural, intuitive flow

Search bar accessibility (in the header, footer, and/or sidebar of the website)

Well-organized content that can be navigated intuitively

Clean Website Layout and Design

Your website’s layout and design are foundational to a good user experience.

A visually pleasing design, combined with a clear, straightforward layout, makes your website engaging and easy to use.

Your site layout and design should feature:

A consistent color scheme

High-quality images & videos

Readable fonts

Clear call-to-action sections

Whitespace usage

Consistent branding elements (logos, motifs, etc.)

Now, if you’re not well-versed in web design, you’ll struggle to build a website like this from scratch.

But with the right tools, you won’t even have to.

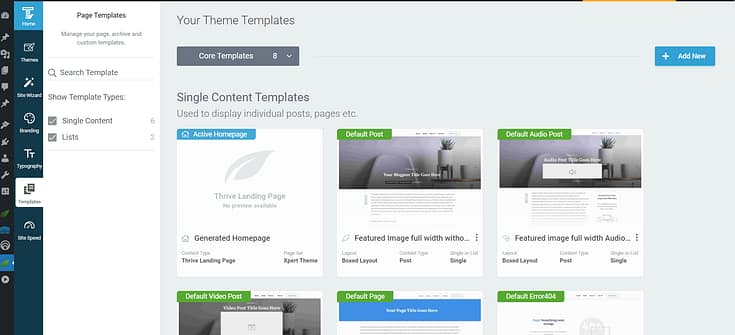

Thrive Theme Builder + Thrive Architect are the no-code, website building duo you need to build a clean, conversion-focused website your audience will love.

Thrive Theme Builder helps you create a great-looking site layout, along with the necessary core page templates you’ll need (homepage, blog page layout, default blog post design, 404 page, etc.)

Once you’ve set up your theme, you’ll use Thrive Architect’s user-friendly drag-and-drop visual editor to customize your page templates to your exact liking.

Choose from hundreds of landing page templates to add to your site and use our library of design elements to make them unique to your business.

Mobile Friendliness

In a world where our phones and tablets have almost become an extension of ourselves, having a mobile-friendly website is non-negotiable.

When a visitor accesses your site through their mobile device, your site’s content and layout should adjust automatically, allowing for easy navigation and an enjoyable experience.

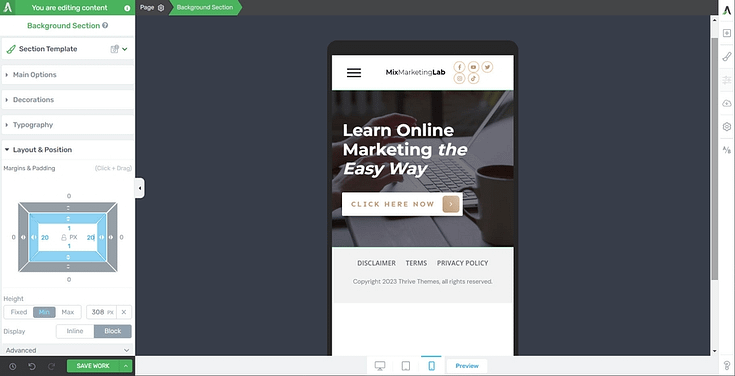

If you’re using Thrive Architect, you can optimize your pages and create a mobile version of your website in seconds.

Thrive Architect includes a built-in “Responsive Design” feature that lets you control how individual elements display on desktop, tablet, and smartphone screens.

Page Loading Speed

Websites that load quickly meet user expectations for efficiency and immediacy.

Slow-loading pages can lead to frustration and high bounce rates, as users are likely to leave a site that doesn’t load promptly.

To avoid a slow-loading website, you should:

Optimize your images and videos

Ensure none of your plugins are outdated

Use a theme that is built with clean code and is updated regularly

Install a site speed plugin (or tools found in Google Search Console) for additional assistance

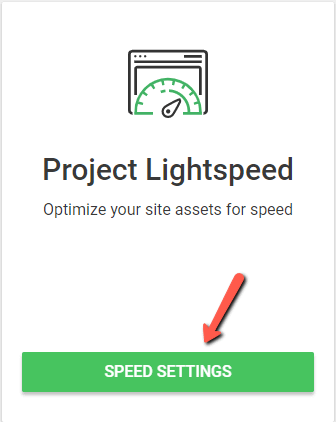

Thrive Theme Builder also includes a Website Speed Optimization tool to ensure your website loads fast.

This feature is automatically activated on your website, so you don't need to do anything here.

If you do want to fine-tune its settings, our site performance optimization tools are simple to understand and straightforward to use, so you can finish in a few minutes.

2. SEO (Search Engine Optimization)

Your well-designed website won’t be effective if your target audience can’t find it.

SEO is important because, when done right, it increases your site’s visibility and boosts your chances of ranking higher on search engine results pages (SERPs).

And it isn’t just about getting more eyes on your site. It’s about attracting the right kind of traffic: people who are genuinely interested in what you have to offer.

On-page SEO Checklist: Optimize Your Content and Structure

On-page SEO is about optimizing your website's content for both search engine crawlers (and their algorithms) and actual searchers.

Use SEO tools like WPBeginner Keyword Generator, Google Keyword Planner, or Ahrefs to start your keyword research & find relevant terms for your content.

Make sure every piece of content has a unique title tag that includes the main keyword. Keep it under 60 characters.

Write an attention-grabbing meta description (130 to 150 characters) for every post and page, and include the main keyword in it.

Use headings (H1, H2, H3, etc.) to structure your content. Include your main keyword in your H1 header to highlight your page’s main topic.

Use short paragraphs and bullet points to make your content easy to read and scan. Avoid long-winded sentences, too.

Describe the contents of your images using alt text, and include keywords where they fit naturally (don’t forget to compress images for faster page load speeds).

Link to other relevant posts or pages on your site (internal linking), and use anchor text that accurately describes the linked page's content.

Keep your posts' and pages' URLs simple, and include the target keyword when you can.
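If you want to spot-check a few pages by hand before installing an SEO plugin, several items on this checklist can be turned into a quick script. The sketch below is a minimal, hypothetical example using only Python's standard library: `OnPageAudit` and `audit_page` are names made up for illustration, and the thresholds (title under 60 characters, meta description between 130 and 150) simply mirror the guidelines above rather than any official limit. It doesn't fetch pages or check URLs and internal links; you pass in one page's HTML.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collects the title, meta description, H1 text, and images missing alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.h1 = ""
        self.images_missing_alt = []
        self._current = None  # "title" or "h1" while we are inside one of those tags

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1"):
            self._current = tag
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt.append(attrs.get("src") or "(no src)")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1 += data


def audit_page(html, keyword):
    """Return a list of human-readable problems found in one page's HTML."""
    parser = OnPageAudit()
    parser.feed(html)
    kw = keyword.lower()
    title = parser.title.strip()
    desc = parser.meta_description.strip()
    h1 = parser.h1.strip()

    problems = []
    if not title:
        problems.append("missing title tag")
    elif len(title) >= 60:
        problems.append(f"title is {len(title)} characters (aim for under 60)")
    if title and kw not in title.lower():
        problems.append("keyword missing from title")
    if not 130 <= len(desc) <= 150:
        problems.append(f"meta description is {len(desc)} characters (aim for 130-150)")
    if kw not in desc.lower():
        problems.append("keyword missing from meta description")
    if kw not in h1.lower():
        problems.append("keyword missing from H1")
    for src in parser.images_missing_alt:
        problems.append(f"image without alt text: {src}")
    return problems
```

Call it with a page's HTML and your target keyword, e.g. `audit_page(html, "website optimization")`. It returns a list of issues to fix, and an empty list means every check passed.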

Off-page SEO

While on-page SEO is about optimizing the content on your website, off-page SEO strategy focuses on what happens outside of your site to improve your brand awareness, rankings, and authority.

Build quality backlinks (link building) through guest posting, collaborating with other creators in your industry, and identifying broken links on other websites (and offering your content as a replacement)

Regularly post engaging high-quality content on social media platforms and link back to relevant posts on your website

Work on your Local SEO if you have a physical business:

Create a Google Business Profile (formerly known as Google My Business) and keep it updated with accurate information and regular posts

List your business in relevant local directories

Respond to all your reviews on platforms like Google, Yelp, Amazon, etc. – both good and bad. This shows search engines you value your customers’ business and feedback.

Technical SEO

Technical SEO deals with the more technical aspects that impact your site's performance in search engines. This is about ensuring that search engines can easily crawl, understand, and index your site.

- Make sure your website has a logical, well-organized structure so search engines can easily crawl and understand it.

- Use tools like Google PageSpeed Insights or GTmetrix to test your site’s loading speed and find what’s slowing it down. Alternatively, a site speed plugin can help with this too.

- Secure your website with an SSL certificate. This encrypts data transmitted between your server and visitors' browsers, which is a factor that search engines consider for ranking.

- Create a sitemap and submit it to search engines (via Google Search Console, Bing Webmaster Tools, etc.) to help them crawl and index your site more effectively.

- Add schema markup (structured data) to your site so your pages are eligible for rich snippets in Google search results.
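WordPress (since version 5.5) and SEO plugins like AIOSEO generate a sitemap for you, so you normally won't write one by hand. Still, it helps to know what the file contains: a list of your URLs in the XML format defined by the sitemaps.org protocol, optionally with each page's last-modified date. Here's a minimal Python sketch of that format; `build_sitemap` is a hypothetical helper and the example.com URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for (url, lastmod) pairs; lastmod is 'YYYY-MM-DD' or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # ElementTree escapes &, <, > for us
        if lastmod:
            ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", "2025-01-15"),
        ("https://example.com/blog/website-optimization-checklist/", "2025-01-10"),
        ("https://example.com/contact/", None),
    ]))
```

You'd save the output as something like sitemap.xml at your site's root and submit its URL in Google Search Console or Bing Webmaster Tools. The protocol requires characters such as `&` in URLs to be escaped, which ElementTree does automatically.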

Other important tasks include using your robots.txt file wisely, optimizing your site’s Core Web Vitals, checking for crawl errors, using heatmaps, and conducting regular technical SEO audits.
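Using robots.txt wisely mostly means making sure you don't block pages you want indexed. One way to sanity-check your rules is Python's built-in `urllib.robotparser`. In this sketch, the robots.txt content, the example.com domain, and the `check_paths` helper are all hypothetical. One caveat: Python's parser applies the first rule that matches a URL, while Google applies the most specific (longest) matching rule, so the narrow `Allow` line is placed before the broader `Disallow` line to make both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a WordPress site. Allow comes first because
# urllib.robotparser uses the first matching rule (Google uses the most specific one).
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
Sitemap: https://example.com/sitemap.xml
"""


def check_paths(robots_txt, paths, user_agent="Googlebot", site="https://example.com"):
    """Return {path: True/False} showing whether user_agent may crawl each path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {path: parser.can_fetch(user_agent, site + path) for path in paths}


if __name__ == "__main__":
    pages = ["/", "/blog/website-optimization-checklist/", "/wp-admin/", "/wp-admin/admin-ajax.php"]
    for path, allowed in check_paths(ROBOTS_TXT, pages).items():
        print(f"{path}: {'crawlable' if allowed else 'blocked'}")
```

If an important page shows up as blocked, that's a sign a `Disallow` rule is broader than you intended.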

If you’re handling your site’s SEO on your own, this can quickly become time-consuming, especially if you aren’t familiar with the ins and outs of SEO.

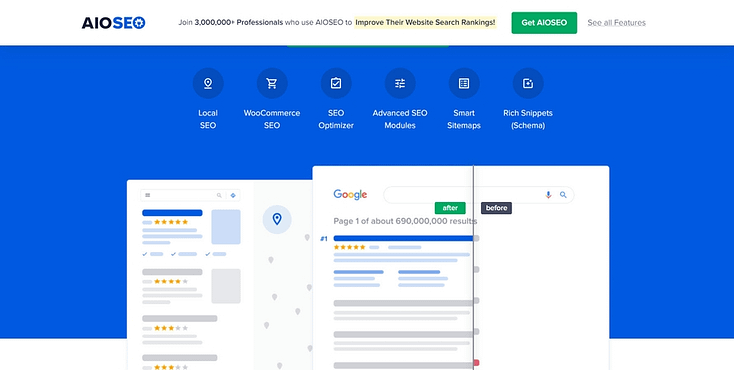

To make your job much easier, we recommend using a WordPress SEO plugin like All in One SEO or Yoast SEO, alongside SEO tools like Moz, to optimize your website for search engines.

Our top recommendation is All in One SEO:

AIOSEO handles all the technical aspects of optimizing your website and provides key tips on how to make your content SEO-friendly.

This plugin is easy to install, even easier to use, and will help you get your content in front of the right eyes, so you can convert your target audience into leads and customers.

3. Content Optimization

Next up is your actual site content.

This is more than just filling your website with text. You need to focus on creating high-quality, relevant content that resonates with your audience and makes them want to stay on your website to learn more.

Keyword Usage

Integrate relevant keywords naturally into your content.

This includes using them in titles, subheadings, and throughout the body. But remember, it's not just about keyword quantity; relevance and context are key.

Try using long-tail keywords (with mid to low search volume) instead of shorter, generic terms to ensure your content matches your audience’s search intent.

Regular Content Updates

Keep your content fresh and up-to-date.

This shows search engines that your site is active and provides current information, which is an important ranking factor.

Regular updates also give visitors a reason to come back and trust that your content is recent and relevant.

Multimedia Elements

Break up text with high-quality images, videos, and infographics.

Multimedia elements can make your content more engaging and shareable, offering a richer user experience.

Plus, they can help explain complex topics in a more digestible format.

If you’re building your business on a budget, consider creating your own website images with affordable tools.

Always resize and optimize your images to avoid hampering your site’s load speed.

Readability and Content Structure

Your content should be easy to read.

Use short paragraphs, bullet points, and subheadings to break up text. Ensure that the font size and style are comfortable for reading on various devices.

A well-structured article not only keeps readers on the page longer but also makes it easier for search engines to understand your content.

Keep the design of your pages and posts consistent. If you’re using Thrive Theme Builder and Thrive Architect on your site, this won’t be a problem.

Templates in Thrive Architect

One of your first steps when setting up your website is to select sitewide templates, e.g. the default post and default WordPress page templates. When you change these templates (e.g. color, font style, or size), the changes automatically apply to all existing posts and pages.

4. Conversion Rate Optimization (CRO)

CRO is all about tweaking your website to make it more likely for your visitors to do what you want them to do - be it buying a product, signing up for a newsletter, or filling out a contact form. It's crucial for turning the traffic you already have into real results for your business.

Clear Call-to-Action Buttons

Your CTA buttons need to pop off the page and make it crystal clear what you want your visitors to do next.

Phrases like 'Buy Now,' 'Sign Up,' or 'Learn More' should be front and center, begging for a click.

If you struggle with CTA section placement, we recommend using a landing page builder with templates.

Thrive Architect features hundreds of landing page templates built with core CRO principles in mind.

Example of a CTA section from a Thrive Architect template

Instead of worrying about where to place what, all you need to do is replace the placeholder texts and images with your page copy, visuals, and links to your product pages, pricing pages, or content.

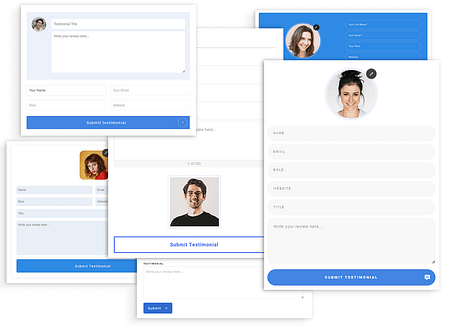

User-Friendly Forms

The easier your forms are to fill out, the more likely they'll get completed.

Keep them simple, ask only for what’s necessary, and make sure each field is clearly labeled.

If you've got a multi-step form, showing a progress bar can encourage completion.

You can take things a step further by implementing advanced targeting techniques. This means showing your forms only on specific pages, so they appear in front of the right audience.

To do this, you’ll need a reliable form-building plugin.

Thrive Leads, our lead-generation form plugin, features advanced targeting and a library of 400+ templates you can use to create attention-grabbing forms and popups that generate conversions.

You can purchase this plugin separately, or with Thrive Theme Builder, Thrive Architect and 7 other premium plugins when you purchase Thrive Suite, the ultimate WordPress plugin bundle.

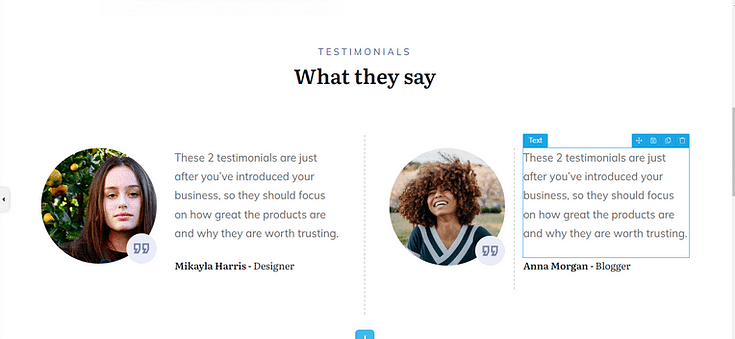

Trust Signals

Build trust instantly by displaying testimonials, user reviews, and any certifications right where new visitors can see them.

This kind of social proof reassures them that they're making the right choice by choosing you.

Like CTA sections, sometimes it’s hard to identify where to place testimonials and other social proof.

But all our landing page templates in Thrive Architect come with pre-placed testimonial sections, to make the design process much easier for you.

Social proof section in Thrive Architect

Scarcity Marketing Elements

Use countdown timers or notes about limited availability to create a sense of urgency. It's about making your visitors feel they need to act now or risk missing out.

Thrive Ultimatum, our scarcity-marketing plugin, helps you create targeted countdown timers to get your audience to convert quickly. You also get access to this plugin when you purchase Thrive Suite.

A/B Testing for Different Website Elements

Regularly test different elements of your site, like headlines, CTAs, or images, against each other to see which ones perform better.

A/B testing gives you hard data on what's working and what's not, allowing you to continuously refine and enhance the user experience and conversion potential.

If you’ve purchased Thrive Architect, you also get access to Thrive Optimize, a user-friendly A/B testing plugin you can get started with in minutes.
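Before declaring a winner, it's worth checking that the difference between two variants isn't just random noise. A common way to do this for conversion rates is a two-proportion z-test. A/B testing tools handle the statistics for you, so treat this Python sketch as an illustration of the idea, not a description of how any particular plugin calculates its results. The visitor and conversion numbers are made up.

```python
from math import erf, sqrt


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1 + erf(x / sqrt(2)))


def ab_test(visitors_a, conversions_a, visitors_b, conversions_b):
    """Two-proportion z-test comparing the conversion rates of variants A and B.

    Needs at least one conversion and one non-conversion overall (otherwise the
    standard error is zero).
    """
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    # Pooled rate, assuming (null hypothesis) that both variants convert equally well
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - normal_cdf(abs(z)))  # two-sided test
    return rate_a, rate_b, z, p_value


if __name__ == "__main__":
    rate_a, rate_b, z, p = ab_test(visitors_a=1000, conversions_a=50,
                                   visitors_b=1000, conversions_b=70)
    print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p:.3f}")
```

With these hypothetical numbers, variant B converts at 7% versus 5% for A, yet the p-value comes out at roughly 0.06, just above the common 0.05 cutoff. In other words, B looks promising, but you'd want more traffic before calling it the winner.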

5. Security Optimization

At a time when online threats are becoming more sophisticated, ensuring your website's security is non-negotiable.

Website security is about protecting sensitive data from malicious attacks and maintaining the integrity of your online presence.

When site visitors feel confident about their safety on your site, trust grows, which is fundamental for customer loyalty and retention.

In addition to using a security plugin to scan for malware and vulnerabilities, your site will also require the following:

SSL Certificates

An SSL certificate (technically a TLS certificate, since TLS has replaced the older Secure Sockets Layer protocol) lets your website encrypt the data exchanged with its visitors. It's a must for safeguarding personal information.

You can spot an SSL-secured website by the 'https' and padlock icon in the browser address bar – a sign that builds instant trust.

Example of a secure URL

Most hosting providers include an SSL certificate with your plan. If yours doesn't, it might be time to consider a new host.

Regular Software Updates

Whether it's your website's CMS, theme, plugins, or scripts, keeping them up to date is crucial.

Updates often include security patches that protect against newly discovered vulnerabilities.

In WordPress, you can update your themes and plugins manually or enable automatic updates.

Secure Password Policies

Encourage strong password practices for your website’s admin and user accounts.

You should also enable multi-factor authentication for an added layer of security.
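Most authenticator apps (Google Authenticator, Authy, etc.) generate their six-digit codes with the time-based one-time password (TOTP) algorithm from RFC 6238. The Python sketch below shows how that works, purely to demystify it: your WordPress login should rely on a well-tested two-factor plugin, not hand-rolled code. The `totp` function name is our own; the parameters (HMAC-SHA1, 30-second steps, 6 digits) are the common defaults.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, for_time=None, step=30, digits=6):
    """Time-based one-time password (RFC 6238) using HMAC-SHA1."""
    if for_time is None:
        for_time = time.time()
    counter = int(for_time // step)  # number of 30-second windows since the Unix epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # "dynamic truncation" from RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    # Illustrative secret only; real secrets are random and shared once via a QR code
    print(totp(b"12345678901234567890"))
```

Your phone and the server share the secret once; after that, both compute the same code from the current time, so a stolen password alone isn't enough to log in.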

Regular Site Backups

Regular backups of your website are a safety net.

In the event of a security breach or data loss, having up-to-date backups means you can restore your site quickly and with minimal damage. You’ll need a backup plugin to make this happen easily.

Duplicator is your best bet. This plugin makes it easy to back up your entire site (pages, posts, files, and database) and migrate it to a new host if necessary.
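A plugin like Duplicator (or your host's backup tool) handles this for you, but the core idea is simple: copy your site's files into a dated archive and keep a limited number of recent copies. Here's a minimal Python sketch of that idea. The function names and folder paths are hypothetical, and it covers files only: a WordPress site also needs a copy of its database (for example, a `mysqldump` export), which is one reason a dedicated plugin is the easier route.

```python
import shutil
from datetime import datetime
from pathlib import Path


def backup_directory(source_dir, backup_dir):
    """Zip source_dir into backup_dir with a timestamped name; return the archive path.

    backup_dir should live outside source_dir, or the backup would include itself.
    """
    Path(backup_dir).mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    base_name = Path(backup_dir) / f"site-backup-{stamp}"
    # make_archive appends ".zip" and returns the path of the archive it created
    return shutil.make_archive(str(base_name), "zip", root_dir=source_dir)


def prune_old_backups(backup_dir, keep=7):
    """Delete all but the newest `keep` archives; timestamped names sort oldest first."""
    archives = sorted(Path(backup_dir).glob("site-backup-*.zip"))
    for old in archives[:max(len(archives) - keep, 0)]:
        old.unlink()
```

For example, running `backup_directory("/var/www/html/wp-content", "/backups")` and then `prune_old_backups("/backups", keep=7)` once a day would keep about a week of file backups. Store copies somewhere other than your web server, too, so a server failure doesn't take your backups with it.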

6. Analytics and Monitoring

Web analytics offer invaluable insights into how users interact with your site.

From which pages they visit to how long they stay and where they drop off, these insights allow you to tailor your website to better meet user needs and achieve your business objectives.

In essence, analytics turn guesswork into targeted strategy, enabling continuous improvement of your site’s performance and user experience.

Setting Up Google Analytics

As a starting point, set up Google Analytics on your website. It's a powerful, free tool that provides a wealth of data about your website's traffic, user behavior, and much more.

The best way to set up analytics on your website is to use a WordPress analytics plugin.

We recommend using MonsterInsights.

Known for its outstanding ease of use and powerful feature set, this plugin makes it super simple for WordPress site owners to integrate Google Analytics with their sites – without needing to deal with any code.

Once installed, the MonsterInsights plugin offers a comprehensive, user-friendly dashboard directly within your WordPress dashboard, allowing you to view complex analytics data in a clear, easily understandable way.

This powerful tool also offers a wide range of in-depth tracking features – putting it far ahead of its competitors.

You can use this plugin to view real-time stats and to track e-commerce activity, form submissions, and custom dimensions.

Whether you’re a WordPress beginner or an advanced user, MonsterInsights will help you make the right kind of data-driven decisions without struggling with Google Analytics' native interface.

Best Practices

Make it a habit to regularly check your analytics.

Consistent monitoring helps you spot trends, identify issues, and recognize successful elements of your site.

Key metrics to focus on include:

Traffic Sources: Understand where your visitors are coming from – organic search, social media, direct visits, or referral sites.

User Engagement: Metrics like average session duration, pages per session, and bounce rate give you an idea of how engaging your content is.

Conversion Rates: Track how well your site achieves its goals, whether it's making sales, generating leads, or encouraging sign-ups.

Page Performance: Identify which pages are performing well and which aren't. Look at metrics like pageviews, time on page, and exit rates.

Mobile Performance: With the increasing importance of mobile, monitor how your site performs on mobile devices versus desktop.
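Your analytics tool calculates these numbers for you, but seeing the arithmetic makes them easier to interpret, and segmenting by traffic source or device is how you compare, say, mobile against desktop. This Python sketch works on a hypothetical list of session records (the `Session` fields are assumptions, not a Google Analytics export format). It uses the classic definition of a bounce as a single-page session; GA4 defines bounce rate differently, as the share of sessions that weren't engaged.

```python
from dataclasses import dataclass


@dataclass
class Session:
    source: str      # e.g. "organic", "social", "direct", "referral"
    device: str      # e.g. "mobile" or "desktop"
    pageviews: int
    converted: bool  # did this session complete a goal (sale, sign-up, lead)?


def summarize(sessions):
    """Compute engagement and conversion metrics for a non-empty list of sessions."""
    total = len(sessions)
    return {
        "pages_per_session": sum(s.pageviews for s in sessions) / total,
        # A "bounce" here is a single-page session (the classic definition)
        "bounce_rate": sum(s.pageviews == 1 for s in sessions) / total,
        "conversion_rate": sum(s.converted for s in sessions) / total,
    }


def by_segment(sessions, key):
    """Group sessions by an attribute (e.g. "source" or "device") and summarize each group."""
    groups = {}
    for s in sessions:
        groups.setdefault(getattr(s, key), []).append(s)
    return {name: summarize(group) for name, group in groups.items()}
```

Calling `by_segment(sessions, "device")` would show, for example, whether mobile visitors bounce more or convert less than desktop visitors, which is exactly the comparison the Mobile Performance metric asks for.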

Next Steps: Audit Your Website

Now that you know how to optimize your website, it’s time to review your web pages and identify the areas that need to be improved.

Web Optimization Checklist: Final Words

And there you have it! Now you have everything you need to improve your website and deliver a seamless, enjoyable experience to your visitors.

These tips will also help you turn more of those visitors into leads and paying customers.

Bookmark this post and refer back to it regularly to ensure you’ve covered all the important parts of your website.

And if you’re looking for new tools to overhaul your website and start the year on a better note, then you should definitely consider Thrive Suite.

Each plugin in this bundle is designed to enhance your WordPress site and turn it into an engaging, conversion-generating machine.

Filled with templates, drag-and-drop functionality, and user-friendly interfaces, you’ll have a new and improved site in no time.

But don’t just take our word for it: